When Machines Become the Lead Scientists: What AI Scientists Mean for Cyberpunk Culture and Industry

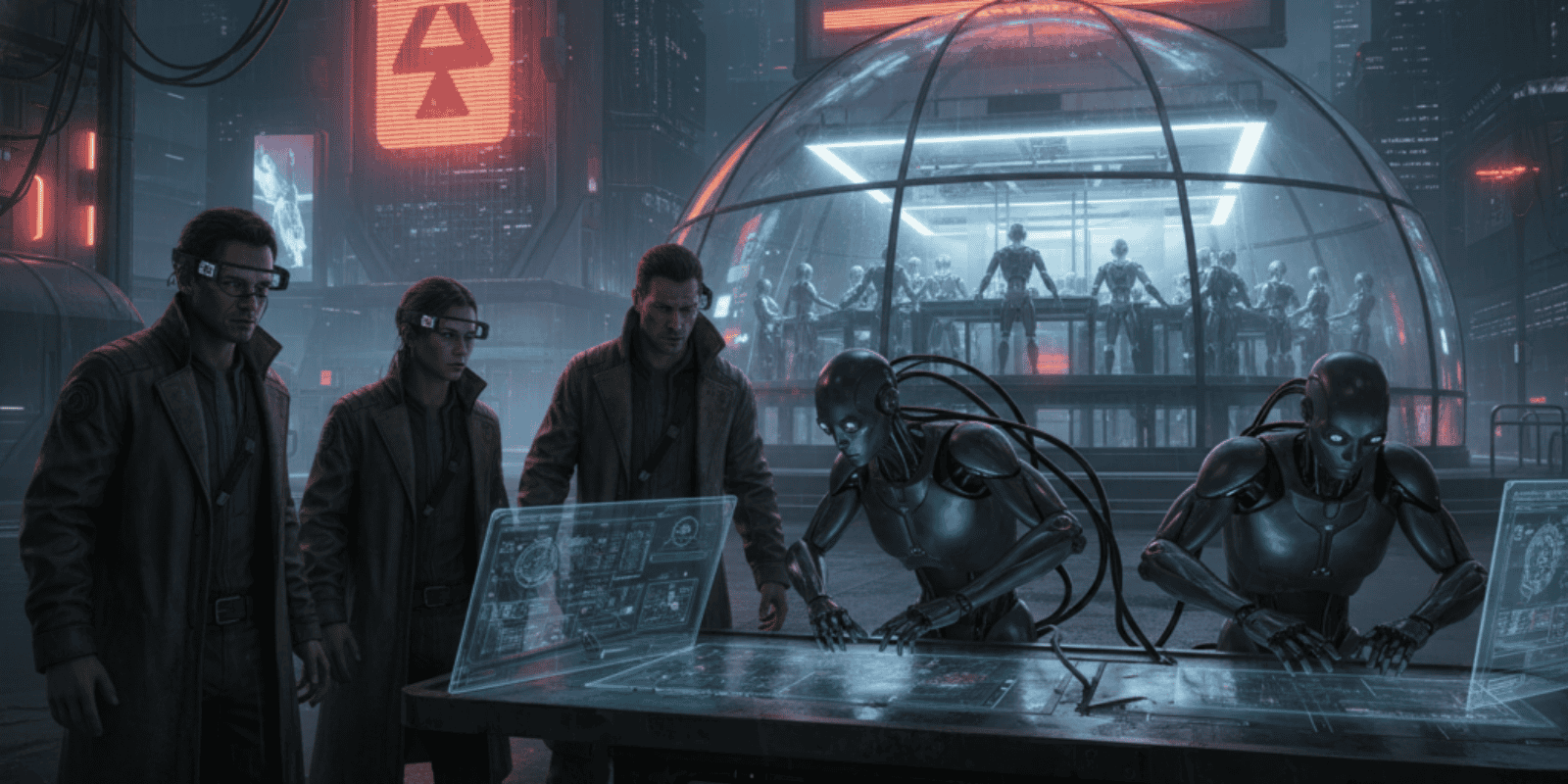

In a neon-lit basement lab a programmer watches a robot pipette, the glow of a monitor reflecting in safety goggles. Outside, a corporate biotech tower broadcasts its quarterly triumphs in holographic type; inside, a community scientist debates whether algorithms are liberators or new foremen.

Most commentary frames AI scientists as productivity tools that will speed discovery and cut costs for Big Pharma and elite labs. The overlooked reality is more granular and more combustible: the arrival of agentic research engines rewrites who owns experiments, who gets credit, and how power circulates between megacorps, boutique labs, and street-level biohackers. That shift has practical consequences for small teams and for subcultures that style themselves on urban futurism.

Why corporations and street hackers are both paying attention

AlphaFold’s leap to near-instant protein structures reset expectations about what software can do for biology, and industry has not forgotten the lesson. DeepMind and EMBL’s launch of the AlphaFold Protein Structure Database in 2021 created an infrastructure that scaled to more than 200 million predictions by 2022, turning months of bench work into a query box and an afternoon of exploration. (embl.org)

At the same time, the first robotic scientists proved a conceptual point a decade and a half ago: machines can generate hypotheses, run assays, and validate results with minimal human choreography. That lineage from Adam to modern automated platforms matters because it established plausible blueprints for fully autonomous research agents. (cam.ac.uk)

A brief history that reads like a cold case file

Early demos such as Adam and Eve began as clever automation and ended up revealing uncomfortable truths about reproducibility and human variability in the lab. Those experiments were as much sociology as engineering; they showed machines could reduce human error and bias in repeatable ways, even as they exposed the limits of human oversight. (cam.ac.uk)

The next chapter accelerated when machine learning helped find new drug leads in libraries of millions of compounds, cutting screening times that once took years down to days. That work gave companies a commercial template for AI-driven discovery and shifted the R&D calculus across the industry. (news.mit.edu)

The core story: speed, scale, and the new research stack

Self-driving laboratories and multiagent AI pipelines now stitch together simulation, synthesis, and physical experimentation into continuous cycles that do not require an eight-hour human shift. Autonomous labs can optimize thousands of experimental variables overnight and converge on promising leads with orders of magnitude less labor. The technical literature and policy reviews show this is not hypothetical but an operational model rolling out across pharma and research institutions. (pmc.ncbi.nlm.nih.gov)

For cyberpunk culture the practical effect is immediate and visual: downtown hacker spaces trade soldering irons for microfluidic chips, corporate logos in glass towers buy dominance in datasets, and a new market opens where models, lab time, and hardware are the scarce commodities. It is less Blade Runner and more subscription economy for discovery, with neon accents.

Machines will not overthrow scientists; they will outwork them and then invoice for the privilege.

How this rewires cyberpunk aesthetics and labor

The archetypal cyberpunk image merges grime with high tech and distrust of corporate power. AI scientists invert that image into a dividend calculation: which actor captures the outputs of autonomous discovery, and who pays the licensing fee? If a community lab uses an open model to design a novel enzyme, the downstream value chain may still be captured by whoever controls synthesis, scale, and regulatory channels. That tension fuels both mainstream fear and underground ingenuity.

A Ryan Reynolds-style observation is permitted here: when laboratories run 24/7 and never need coffee, the office debate about artisanal espresso suddenly feels less relevant and oddly quaint.

Practical math for businesses with 5 to 50 employees

A small synthetic biology studio that currently pays a contract lab $10,000 per month for screening can, with access to a shared automated platform, cut those costs by 40 to 60 percent while tripling throughput. At the midpoint of that range, a cut from $10,000 per month to $5,000 per month saves $60,000 a year. If the faster pipeline shortens time to prototype from 18 months to 6 months, the value of earlier market entry or fundraising can easily exceed those savings several times over.
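The annualized figure is easy to check across the whole 40 to 60 percent range. The sketch below is only an illustration: the function name `annual_savings` is hypothetical, and the single input is the $10,000 monthly contract-lab cost from the example.

```python
def annual_savings(monthly_cost: int, reduction_pct: int) -> float:
    """Yearly savings when a monthly cost falls by reduction_pct percent."""
    return monthly_cost * reduction_pct * 12 / 100


CONTRACT_LAB_MONTHLY = 10_000  # figure from the example in the text

# Sweep the low end, midpoint, and high end of the quoted range.
for pct in (40, 50, 60):
    saved = annual_savings(CONTRACT_LAB_MONTHLY, pct)
    print(f"{pct}% reduction saves ${saved:,.0f} per year")
```

The range works out to between $48,000 and $72,000 a year, with the $60,000 figure sitting at the midpoint.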

For a boutique materials firm with 20 employees, licensing access to an AI-assisted discovery API at $2,500 per month instead of hiring two junior chemists at $80,000 each creates a near-term cashflow advantage but concentrates technical knowledge off-premises. On salary alone, that tradeoff saves $130,000 in the first year ($160,000 in wages against $30,000 in API fees), and more once payroll taxes and benefits are counted, but it increases vendor dependency. That is a business decision, not an ethics lecture.
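The hire-versus-license comparison can be sketched the same way. The text does not state a rate for payroll taxes and benefits, so the `overhead_rate` parameter below is an assumption left for the reader to fill in, and the function name is hypothetical.

```python
def first_year_difference(
    salary: float,
    hires: int,
    api_monthly: float,
    overhead_rate: float = 0.0,  # assumed load for payroll taxes and benefits
) -> float:
    """Cost of hiring minus cost of licensing over the first twelve months."""
    staff_cost = salary * hires * (1 + overhead_rate)
    api_cost = api_monthly * 12
    return staff_cost - api_cost


# Salary and API price come from the example; the overhead rates are illustrative.
for rate in (0.0, 0.10, 0.25):
    diff = first_year_difference(80_000, 2, 2_500, overhead_rate=rate)
    print(f"overhead {rate:.0%}: licensing is ${diff:,.0f} cheaper in year one")
```

With no overhead the gap is $130,000, and every percentage point of overhead on the $160,000 wage bill widens it by $1,600. The sketch deliberately leaves out the cost of vendor dependency, which is the harder number to estimate.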

The cost nobody is calculating

Ownership, oversight, and supply chains become the hidden line items. When AI scientists output candidate molecules or designs, regulatory and IP pathways still require wet lab validation and legal counsel. Those compliance costs scale with the perceived novelty of the discovery and frequently exceed the computational savings. Small teams that save on screening may still owe six figures to attorneys and contract manufacturers later, a gap that founders often miss until grant deadlines pass.

Also, data curation and model bias are financial liabilities. Models trained on datasets owned by large institutions will reflect their blind spots and legal restrictions, meaning that relying on a single provider can introduce systematic error patterns into results that only show up after costly validation.

Risks, governance, and the questions no one dares to bill

Autonomous agents amplifying errors at scale create new systemic risks. A flawed objective function can push a lab to optimize for an artifact rather than a biological mechanism, producing plausible but useless or unsafe outputs. Governance frameworks are catching up, but regulatory authorities and community labs are often out of sync; historical examples show that oversight lags use by years.

The cultural risk is fragmentation. If corporate players close-source high-performing agentic tools, underground labs will bifurcate into a legally compliant cohort and an experimental cohort that trades in gray markets. That is not a dystopian punchline; it is a predictable market segmentation.

What small teams should do next

Budget for validation, not just model access. Negotiate data portability in any vendor contract to avoid lock-in. Build an internal road map that treats autonomous discovery as a tool that accelerates hypothesis generation but still requires human judgment for safety and commercial decisions. If community reputation matters, plan public reproducibility audits to win trust and avoid whistleblower spectacles.

Looking ahead with usable insight

AI scientists will not erase the need for human expertise, but they will reallocate premium value toward interpretive skill, regulatory savvy, and the ability to assemble multiagent workflows. Businesses and creative communities that treat these systems as new instruments rather than replacements will gain the most.

Key Takeaways

- AI scientists are shifting value from manual screening toward data ownership, model access, and regulatory execution.

- Small teams can save tens of thousands of dollars yearly but must budget the same or more for validation and compliance.

- Cyberpunk cultures will split between open experimentalists and corporate-controlled discovery ecosystems.

- Governance and data portability are the critical practical levers for preserving autonomy.

Frequently Asked Questions

Can a small biotech replace lab staff with AI scientists and save money?

AI can reduce routine screening labor and lower costs in the short term, but validation, compliance, and scale-up still require human skills and present nontrivial expenses. A hybrid model usually delivers the best economics and risk profile.

What should a community biohacker space worry about first?

Liability and safety protocols are primary; using autonomous tools raises questions about traceability and reproducibility that can attract regulatory attention. Investing in transparent audit trails and community governance mitigates exposure.

Will corporations lock down the best AI scientists behind paywalls?

Some players will close-source components to capture value, but open databases and academic releases have proven resilient and influential, creating alternative ecosystems. Contracts that demand data portability and open standards reduce vendor lock-in risk.

How soon will an AI-discovered drug reach the market?

AI can accelerate early discovery phases significantly, but clinical trials, manufacturing, and regulatory approvals still take years. The timeline compression mostly reduces preclinical time and costs rather than eliminating clinical risk.

Is the DIY scene likely to create dangerous biological agents using AI?

Historical patterns show real risk but also strong community norms and safety efforts; governance and responsible disclosure are the practical levers to reduce misuse. The balance between openness and oversight will shape outcomes.

Related Coverage

Explore stories about autonomous laboratory infrastructure, the ethics of dataset ownership in biotech, and the economics of open versus closed models for AI-driven discovery on The AI Era News. Readers interested in the aesthetics of urban futures should also look into coverage of biohacker communities and the emerging marketplace for lab-as-a-service.

SOURCES:

- https://news.mit.edu/2020/artificial-intelligence-identifies-new-antibiotic-0220

- https://www.cam.ac.uk/research/news/robot-scientist-becomes-first-machine-to-discover-new-scientific-knowledge

- https://www.embl.org/news/science/alphafold-database-launch/

- https://www.ncbi.nlm.nih.gov/pmc/articles/PMC12368842/

- https://www.theguardian.com/technology/2009/mar/19/biohacking-genetics-research