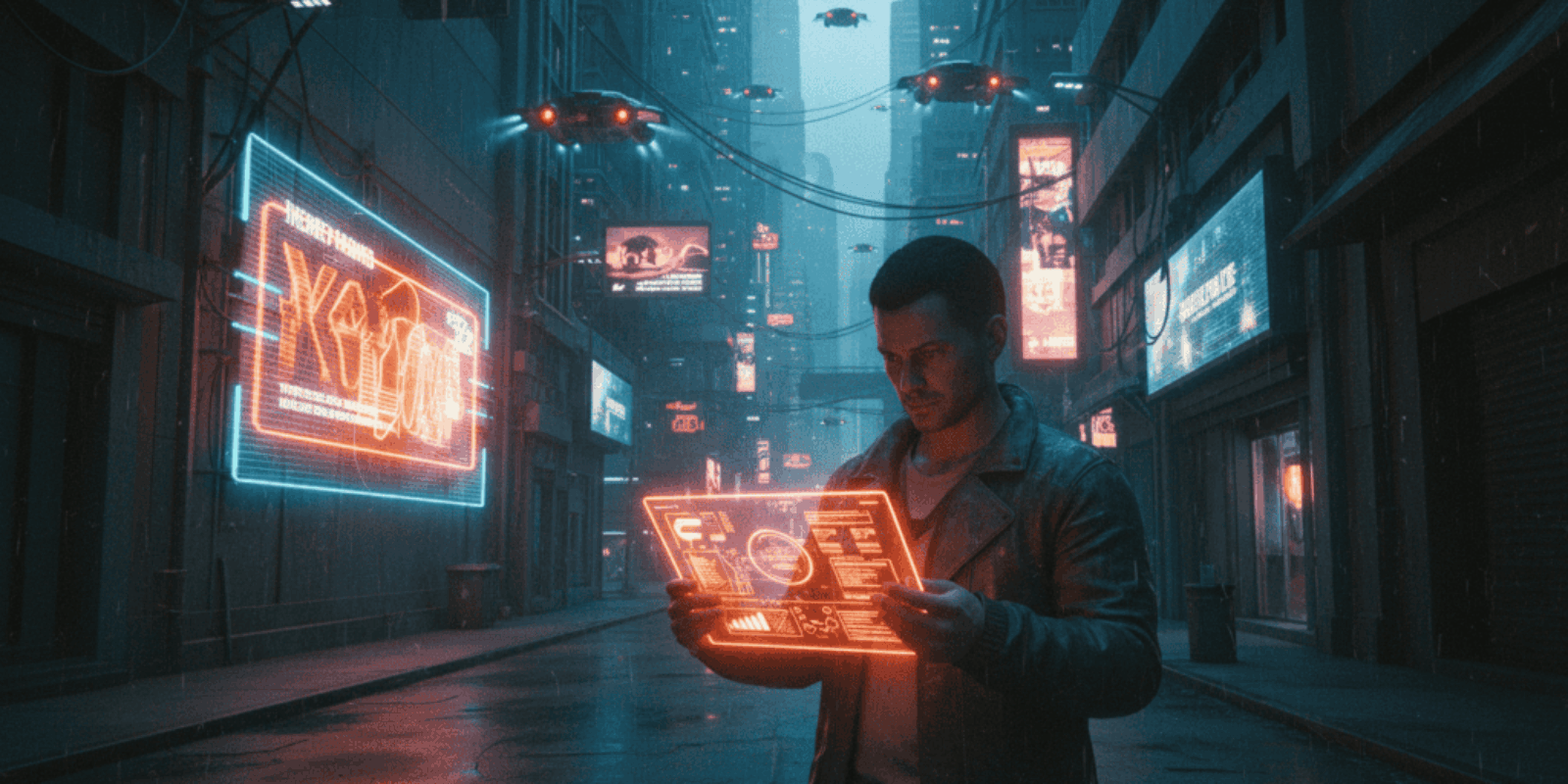

This Week’s Awesome Tech Stories From Around the Web (Through April 4) for Cyberpunk Lovers and Operators

Neon light bleeds through a cracked window as a developer in a cramped loft checkpoints a model release, a brain startup memo, and a congressional press release at one a.m.; outside, a delivery drone hums like a bored prosecutor. The obvious story is speed: faster models, bigger rounds, new hardware, new laws. The less obvious story is how that speed rearranges the market for human attention, bodily sovereignty, and small-business survival in cities that are already half algorithm and half concrete.

Why the mainstream reading feels inadequate

Most headlines read like corporate scorecards: funding totals and model names filling investor briefs. That framing misses the practical friction points for people who build, design, or sell in the margins of the economy where cyberpunk culture lives and breathes. The real business question is not who won the last funding round but who must redesign identity, insurance, and clinical compliance overnight because a new interface or law changed what it means to be a user.

How the money is wiring the city

OpenAI’s funding milestone on March 31 closes the week’s macro chapter by converting future research priorities into immediate capacity for chips, data centers, and agentic software. According to OpenAI’s own announcement, the company closed a massive commitment that will reshape the race for computation and deployment. (openai.com)

Brain-computer interface (BCI) ventures are the most literal rewiring. Merge Labs’ $252 million seed round in mid-January signaled that brain interfaces are no longer science fiction vanity projects but deep strategic plays that link neurotech to AI company roadmaps. That investment is a clear signal to competitors and regulators that somebody expects neural hardware to go mainstream sooner rather than later. (bloomberg.com)

When models learn to be workers, not toys

OpenAI’s GPT-5.4 release on March 5 accelerates the shift from assistant to agent, with models explicitly optimized to complete multistep professional workflows rather than just generate text on demand. For cyberpunk subcultures that fetishize autonomy, this is the moment the smart assistant becomes a participant in city systems rather than a glorified intern. (techcrunch.com)

The Microsoft counterpunch and what it means

Microsoft pushed new Foundry and MAI capabilities over the past few weeks, shipping production models and infrastructure aimed at running powerful multimodal workloads in regulated environments. Those announcements make it practical for enterprises to keep sensitive agent workloads on-premises or in sovereign clouds rather than sending everything to a single public API, which matters if your product handles health data or implanted devices. (blogs.microsoft.com)
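As a minimal sketch of what keeping sensitive workloads off a single public API can look like in practice, the Python below routes each agent workload to a deployment target based on the most sensitive data class it touches. The sensitivity tiers, the endpoint names, and the rule that neural telemetry stays on-premises are hypothetical assumptions for illustration; none of this is a Microsoft Foundry API or a regulatory requirement.

```python
from enum import Enum


class Sensitivity(Enum):
    """Hypothetical data classes, ordered from least to most sensitive."""
    PUBLIC = 1
    INTERNAL = 2
    HEALTH = 3   # PHI and other regulated health data
    NEURAL = 4   # telemetry from implanted or wearable neural devices


# Hypothetical deployment targets; substitute your own endpoints.
PUBLIC_API = "public-api"
SOVEREIGN_CLOUD = "sovereign-cloud"
ON_PREM = "on-prem"


def route_workload(labels: list[Sensitivity]) -> str:
    """Choose where a workload may run, driven by its most sensitive input."""
    highest = max(labels, key=lambda s: s.value, default=Sensitivity.PUBLIC)
    if highest is Sensitivity.NEURAL:
        return ON_PREM          # never leaves hardware the operator controls
    if highest is Sensitivity.HEALTH:
        return SOVEREIGN_CLOUD  # regulated data stays in a jurisdiction-bound cloud
    return PUBLIC_API           # low-risk work can use a shared public endpoint
```

Routing on the maximum label means a single neural or health field is enough to pull the whole workload into the stricter environment, which errs on the side of caution.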

Why competitors are circling like vultures

The landscape now has three vectors: frontier model builders who race on capability and scale, cloud and hardware providers who commoditize inference, and neurotech firms aiming to turn bodies into interfaces. That triad creates commercial incentives to bundle software, hardware, and identity services into sticky stacks that sellers can monetize. The result is vendor lock-in that looks like vertical integration by design, not by accident.

Corporations are buying future intimacy with users and calling it infrastructure.

Practical implications for businesses with 5 to 50 employees

A ten-person design studio that integrates advanced agents for content, image generation, and customer triage must budget for compute, moderation, and legal compliance. If the team allocates $1,200 per month for API usage, $400 per month for third-party content moderation, and $300 per month for upgraded identity and incident response tooling, that comes to $1,900 a month, or $22,800 (about $23,000) a year. That is not venture money; that is rent for a small business that wants to scale without losing customer trust. Small clinics piloting remote neurotech partnerships have to add device validation, consent workflows, and a line item for retained regulatory counsel, which can easily double initial pilot budgets. The math is ugly but simple: multiply the per-user or per-device cost by the expected number of active seats, then add a risk buffer of 20 to 30 percent for compliance and rollback.
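The two calculations above can be sketched in a few lines of Python. The line-item names and the 25 percent default buffer are illustrative assumptions; the studio figures are the ones from the paragraph.

```python
def annual_operating_cost(monthly_line_items: dict[str, float]) -> float:
    """Annualize a set of recurring monthly costs."""
    return 12 * sum(monthly_line_items.values())


def pilot_budget(cost_per_seat: float, active_seats: int, risk_buffer: float = 0.25) -> float:
    """Per-user or per-device cost times expected active seats, plus a buffer
    for compliance work and rollback (the text suggests 20 to 30 percent)."""
    if risk_buffer < 0:
        raise ValueError("risk_buffer must be non-negative")
    return cost_per_seat * active_seats * (1 + risk_buffer)


# The ten-person studio's monthly budget (hypothetical line-item names).
studio = {
    "api_usage": 1200,
    "content_moderation": 400,
    "identity_and_incident_response": 300,
}
print(annual_operating_cost(studio))  # 22800
```

For a clinic pilot, the same pattern applies with per-device costs and the higher end of the buffer, for example `pilot_budget(cost_per_seat=2_000, active_seats=15, risk_buffer=0.30)` with whatever figures your vendors actually quote.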

The cost nobody is calculating yet

Beyond direct vendor fees, there is an implicit tax of infrastructure complexity. Time spent managing API versions, model deprecations, and device firmware compatibility is labor that cannot be billed to clients. For a boutique dev shop that charges $150 an hour, ten hours a month in total spent migrating three client projects to new model versions adds up to $18,000 a year in invisible costs. Expect those invisible costs to be the principal margin eroder for micro teams unless product roadmaps explicitly budget for them. Also, yes, somebody in procurement will buy yet another expensive SLA and feel briefly noble about it.
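A small sketch makes the arithmetic explicit: unbillable migration hours, summed across clients and priced at the shop's billing rate. The per-client split of the ten hours is an illustrative assumption.

```python
def invisible_migration_cost(hourly_rate: float,
                             hours_per_client_per_month: list[float],
                             months: int = 12) -> float:
    """Unbillable labor spent on model migrations, priced at the billing rate."""
    return hourly_rate * sum(hours_per_client_per_month) * months


# Ten hours a month in total, split across three clients (split is hypothetical).
print(invisible_migration_cost(150, [4, 3, 3]))  # 18000
```

Tracking hours per client, rather than one lump figure, shows which accounts are absorbing the most migration work when it is time to renegotiate retainers.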

The legal and civic pressure points

Policymakers are also moving on AI-enabled impersonation and fraud, with legislative proposals that would put new obligations on businesses to detect and mitigate deepfake threats. The AI Fraud Accountability Act, introduced on March 4 by Senators Sheehy and Blunt Rochester, outlines criminal and enforcement mechanisms to deter AI impersonation scams, which means companies deploying customer-facing agents should anticipate mandatory logging, provenance metadata, and possibly signing of synthetic content. If requirements like these take effect, they would change engineering specs and legal review practically overnight. (sheehy.senate.gov)
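Neither the press release nor this article specifies a provenance format, so the following is only a sketch, assuming a team wants tamper-evident metadata on each synthetic asset today. It uses Python's standard `hashlib`, `hmac`, and `json` modules; the field names are hypothetical. HMAC uses a shared secret, so only key holders can verify it; publicly verifiable provenance would need asymmetric signatures or a content-credentials standard such as C2PA.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def _sign(meta: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so the same metadata always yields the same MAC.
    payload = json.dumps(meta, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def provenance_record(content: bytes, model: str, key: bytes) -> dict:
    """Attach hypothetical provenance fields plus an HMAC-SHA256 tag."""
    meta = {
        "sha256": hashlib.sha256(content).hexdigest(),
        "model": model,
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "synthetic": True,
    }
    meta["signature"] = _sign(meta, key)
    return meta


def verify(content: bytes, record: dict, key: bytes) -> bool:
    """Check that the content matches its hash and the metadata was not altered."""
    meta = {k: v for k, v in record.items() if k != "signature"}
    if meta.get("sha256") != hashlib.sha256(content).hexdigest():
        return False
    return hmac.compare_digest(_sign(meta, key), record.get("signature", ""))
```

Using `hmac.compare_digest` rather than `==` avoids leaking timing information when tags are compared.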

Where bodies meet code: brain interfaces and the ethics ledger

Discussions of BCI funding and product parity show the industry pushing beyond keyboards. When neural inputs flow into agentic AIs and back out to actuators, the security model must account for physiological harm and data permanence. Small clinics should treat neural telemetry as the highest sensitivity class and apply multifactor protections and air gapping to recordings and model outputs, because unlike a credit card number, a leaked neural signature cannot be replaced.

Risks and unresolved questions that will torpedo plans

The market assumes that agents will remain predictable and accountable, but agentic AI can take actions that create legal exposure for the human principal. Who is liable when an autonomous assistant requisitions paid ads, signs contracts, or manipulates a user’s behavior via tailored stimulation? There is also a governance gap around BCI safety standards, supply chains for implantable hardware, and cross-border enforcement against synthetic abuse. Finally, the pace of model releases raises the probability of unvetted behavior in production systems, and small teams lack the resources to stress-test each release thoroughly.
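Code cannot settle the liability question, but it can limit how much exposure an agent creates on its own. A minimal sketch, assuming the agent proposes actions as structured records before executing them: legally binding actions and spending above a threshold are held for a human. The action labels, the always-review set, and the $100 limit are hypothetical policy values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    kind: str               # hypothetical labels, e.g. "buy_ads", "sign_contract"
    amount_usd: float = 0.0


# Hypothetical policy values; derive real ones from your contracts and insurance.
ALWAYS_REVIEW = {"sign_contract"}
SPEND_LIMIT_USD = 100.0


def needs_human_approval(action: AgentAction) -> bool:
    """Hold legally binding actions, and any spend above the limit, for a human."""
    if action.kind in ALWAYS_REVIEW:
        return True
    return action.amount_usd > SPEND_LIMIT_USD


def dispatch(actions: list[AgentAction]) -> tuple[list[AgentAction], list[AgentAction]]:
    """Split proposed actions into (auto-approved, queued for human review)."""
    auto = [a for a in actions if not needs_human_approval(a)]
    queued = [a for a in actions if needs_human_approval(a)]
    return auto, queued
```

A gate like this keeps a named human in the loop for the actions most likely to end up in a dispute, which is also the evidence trail a contract's liability cap will lean on.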

The sensible next move for operators

Prioritize three things: provenance and audit trails for generated content, contractual clauses that cap liability for agent mistakes, and staged pilots, with clinical oversight and explicit opt-in, for any brain-enabled feature. Those three steps map directly to risk reduction rather than brand theater.
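For the audit-trail piece, one common pattern is a hash chain: each log entry commits to the hash of the previous one, so editing an old entry breaks every hash after it. The sketch below uses only Python's standard library and is an illustration, not a compliance-grade log; the event fields are hypothetical, and the chain is only tamper-evident if the latest hash is also stored somewhere an attacker cannot rewrite.

```python
import hashlib
import json


class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev: str, event: dict) -> str:
        body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
        return hashlib.sha256(body.encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = self._digest(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited, dropped, or reordered entry fails."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Usage: call `append({"action": "generate_image", "model": "..."})` for each agent action, periodically publish or escrow the latest returned hash, and run `verify()` during audits.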

A short forward-looking close

The week closed with capital and capability moving into bodies and workplaces simultaneously, reshaping the baseline assumptions for identity and agency; small teams that treat trust and governance as product features will find that survival, not hype, becomes the competitive advantage.

Key Takeaways

- OpenAI’s capital and model cadence are accelerating infrastructure investment that will shape who controls compute and models for the next decade.

- Investment in brain interfaces has moved from research theater to large-scale seed funding, tightening the link between AI vendors and neurotech.

- New federal legislative proposals would push businesses to bake in provenance, logging, and moderation for synthetic audio and video.

- Small teams must budget for invisible migration and compliance costs or face rapid margin erosion.

Frequently Asked Questions

How do recent model releases affect my small creative studio?

Model releases increase capability but also create maintenance obligations. Budget for version migrations, test suites, and a posture of defensive content moderation to avoid liability and quality degradation.

Should a 20-person startup consider integrating brain interfaces into product demos?

Not without clinical partners and a clear regulatory strategy. Early stage integration requires device validation, institutional review, and materially larger legal and insurance commitments than typical software features.

What immediate legal changes should a small company watch for?

Track federal bills about AI impersonation and state rules on synthetic nonconsensual imagery; expect requirements for provenance metadata and more aggressive takedown obligations. Prepare incident response and logging as part of product design.

Can small teams afford agentic automation without breaking cash flow?

Yes, if deployments are staged and costs are modeled per user or per workflow. Start with narrow, single-domain agents, measure labor reduction precisely, and check that measurable productivity gains offset subscription or API costs within six months.
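A rough way to apply the six-month test is a payback calculation: months until net monthly savings cover the upfront setup cost. This is a sketch; the parameter names and the example figures (setup cost, hours saved, loaded hourly rate) are hypothetical inputs you would replace with your own measurements.

```python
import math


def payback_months(upfront_cost: float, monthly_cost: float,
                   hours_saved_per_month: float, loaded_hourly_rate: float) -> float:
    """Months until cumulative net savings cover the upfront cost.

    Returns math.inf when the agent costs more per month than it saves."""
    monthly_gain = hours_saved_per_month * loaded_hourly_rate
    net_monthly = monthly_gain - monthly_cost
    if net_monthly <= 0:
        return math.inf
    return upfront_cost / net_monthly


# Hypothetical pilot: $3,000 setup, $800/month in API and subscriptions,
# 20 staff hours saved per month at a $60 loaded rate.
print(payback_months(3000, 800, 20, 60))  # 7.5, which misses a six-month target
```

Returning infinity rather than a negative number keeps the comparison against a six-month target simple: anything that never pays back automatically fails it.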

How should a boutique health provider secure neural data differently from regular PHI?

Treat neural telemetry as the highest-risk asset: use air gapping, encrypt it both at rest and in transit with keys under the provider’s control, and require explicit, renewed consent for any data sharing with third-party models.
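The "explicit, renewed consent" requirement can be enforced as a default-deny check before any export. A minimal sketch, assuming consent is recorded per recipient and per purpose and expires after a fixed window; the 90-day window and the field names are hypothetical, and the check does not replace encryption or air gapping.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Consent:
    recipient: str        # the third-party model or service
    purpose: str          # what the data may be used for
    granted_at: datetime  # timezone-aware timestamp of the latest renewal


CONSENT_TTL = timedelta(days=90)  # hypothetical renewal window


def may_share(consents: list[Consent], recipient: str, purpose: str,
              now: Optional[datetime] = None) -> bool:
    """Default deny: allow only with unexpired consent for this recipient and purpose."""
    if now is None:
        now = datetime.now(timezone.utc)
    return any(
        c.recipient == recipient
        and c.purpose == purpose
        and now - c.granted_at < CONSENT_TTL
        for c in consents
    )
```

Matching on both recipient and purpose means consent given for one vendor or one study cannot silently cover another.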

Related Coverage

Readers who liked this should explore how sovereign cloud deployments change vendor lock-in, deep dives into agentic AI safety for product managers, and explainers on the practical differences between invasive and noninvasive brain-computer interfaces. Those topics map directly to the product governance and design choices that will decide who thrives in the coming decade.

SOURCES:

- https://openai.com/index/accelerating-the-next-phase-ai/
- https://www.bloomberg.com/news/articles/2026-01-15/altman-s-merge-raises-252-million-to-link-brains-and-computers
- https://techcrunch.com/2026/03/05/openai-launches-gpt-5-4-with-pro-and-thinking-versions/
- https://blogs.microsoft.com/blog/2026/03/16/microsoft-at-nvidia-gtc-new-solutions-for-microsoft-foundry-azure-ai-infrastructure-and-physical-ai/
- https://www.sheehy.senate.gov/news/press-releases/sheehy-blunt-rochester-introduce-ai-fraud-accountability-act/