New AI-Powered Robot Can Beat Elite Human Players at Ping Pong, and Here Is What That Means for the AI Industry

Sony’s Ace did more than win points; it compressed years of robotics research into a single, televised moment—and the implications for embodied AI are quietly huge.

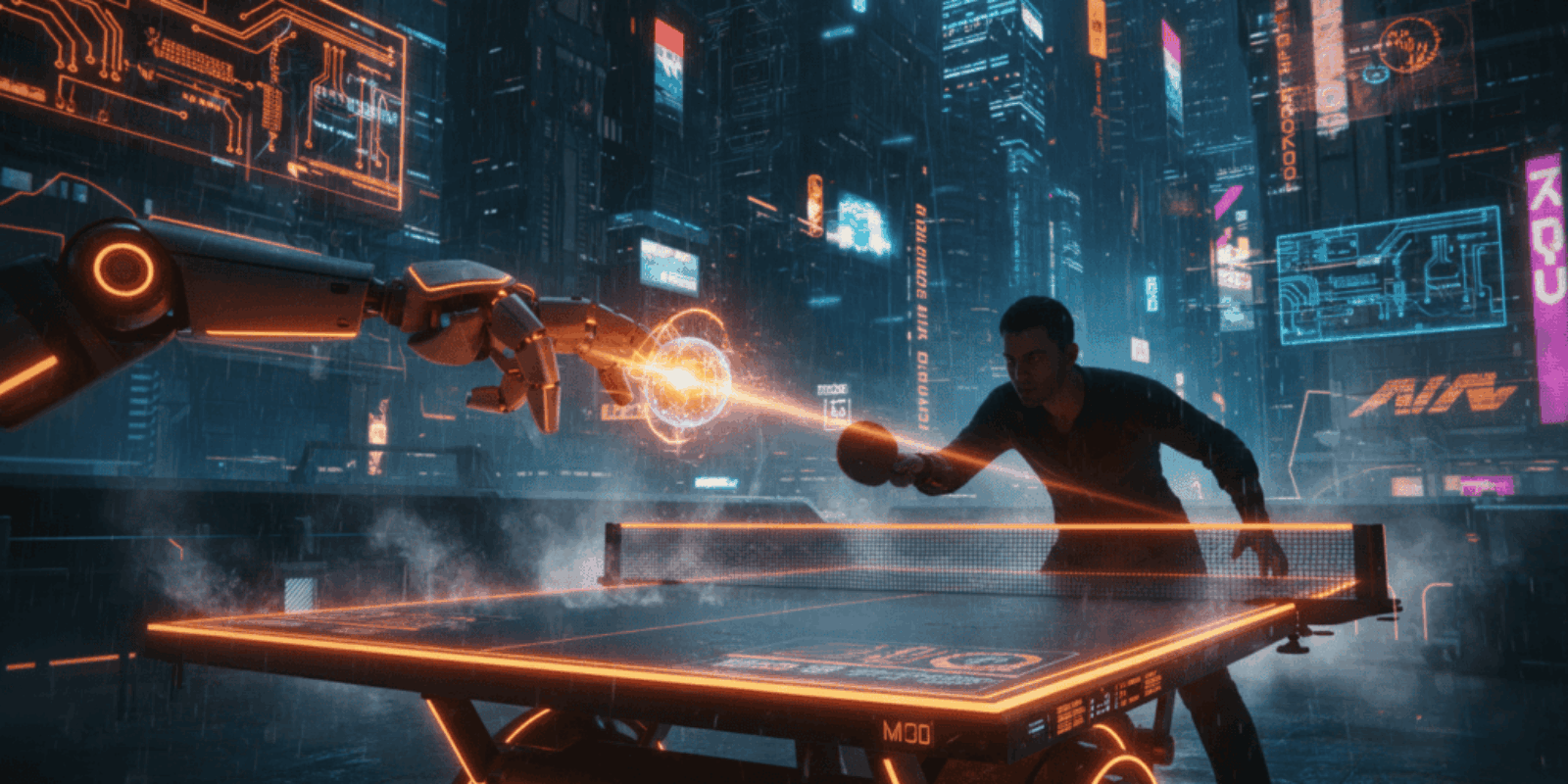

The first serve felt like a trick pulled by a magician who knows all the cards. Under bright Tokyo lights a plastic ball spun, dipped, and vanished toward a corner; a machine with nine camera eyes snatched it back with surgical calm. Spectators felt the same small vertigo that comes when a calculator first does something a human thought only humans could do.

Most headlines framed this as a technical milestone, the familiar breathless celebration of a robot beating a human at a sport. That reading is correct but shallow; the more consequential story is how techniques proven on a ping pong table migrate into systems that must sense, decide, and act in the messy, fast-moving physical world where companies actually have to make money. The reporting leans heavily on Sony’s Nature paper and accompanying press materials, so the industry should read the result both as a vendor-run demonstration and as a roadmap for productizable capabilities. (nature.com)

A sport that has always punched above its weight in robotics

Table tennis has been a benchmark for robotic perception and control since the 1980s because the sport packs extreme speed, complex spin, and tiny margins into a small, repeatable setting. Sony’s Ace is the latest—and most convincing—iteration of that lineage, and it joins a cluster of recent achievements that shift robotics from scripted automation to adaptive, adversarial performance. The Los Angeles Times placed the work in the context of a steady parade of robotic athletic feats and noted that most of Ace’s matches took place in 2025 under official rules. (latimes.com)

Why every AI product team should stop and watch this closely

Beyond the applause, the important detail is not that a robot can win a match; it is that the system stitches together perception, simulation-driven reinforcement learning, and hardware tuned to millisecond response times. That technology stack maps directly to commercial problems where latency and unpredictability are what kill throughput and safety. The same sensing and control principles that let Ace read spin could cut error rates in automated warehouses, speed up robotic surgery tools, or let delivery robots negotiate crowded sidewalks with fewer collisions.

How Ace actually worked during those matches

In April 2025 Sony evaluated Ace in matches against elite and professional players under International Table Tennis Federation rules. The Nature paper reports that Ace won three of five matches against elite players and put up competitive results across the board, outcomes the researchers attribute to new perception systems and reinforcement learning rather than brute-force speed. The team built an Olympic-sized court and ran experiments under licensed officiating to avoid the “rules-lite” trap that weakened earlier demonstrations. (apnews.com)

The three technical pivots that mattered most

Ace pairs event-based vision with conventional cameras, uses a privileged critic during simulated training, and runs model-free reinforcement learning policies at match time. Those policies sample from a bank of skills at about 31.25 hertz, roughly one decision every 32 milliseconds, and map abstract actions into collision-aware motions. In plain language: Ace learned a repertoire of tactics in simulation, then used ultra-fast perception to pick and execute the right one in the real world. Tech reporters highlighted how the robot tracks the ball’s logo to estimate spin, a detail that sounds nerdy but is actually the difference between a predictable robot and one that can cope with trick shots. (techradar.com)
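To get a feel for what a 31.25 hertz decision rate means on the table, the short sketch below converts the rate into a decision period and estimates how far a ball travels between consecutive decisions. The rate comes from the reporting above; the ball speeds and function names are illustrative assumptions, not figures or code from Sony's paper.

```python
# Decision rate reported for Ace's skill-selection policies.
DECISION_RATE_HZ = 31.25

# Assumed ball speeds in metres per second, chosen only for illustration.
ASSUMED_BALL_SPEEDS_M_S = (5.0, 10.0, 20.0)


def decision_period_ms(rate_hz: float) -> float:
    """Time between consecutive policy decisions, in milliseconds."""
    return 1000.0 / rate_hz


def ball_travel_per_decision_m(ball_speed_m_s: float, rate_hz: float) -> float:
    """Distance the ball covers during one decision period, in metres."""
    return ball_speed_m_s / rate_hz


period_ms = decision_period_ms(DECISION_RATE_HZ)
print(f"Decision period: {period_ms:.1f} ms")

for speed in ASSUMED_BALL_SPEEDS_M_S:
    travel = ball_travel_per_decision_m(speed, DECISION_RATE_HZ)
    print(f"At {speed:.0f} m/s the ball moves {travel:.2f} m per decision")
```

Under these assumed speeds the ball covers a meaningful fraction of the table between decisions, which suggests why each decision has to rest on fast, accurate perception of position and spin.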

The business math: where this becomes money, not theater

Imagine a fulfillment center where a robotic arm currently completes 400 picks per hour, or one pick every 9 seconds, with 0.5 seconds of that cycle lost to unplanned delay from perception errors. If Ace-level perception reduced that delay by 0.3 seconds, the cycle drops to 8.7 seconds and throughput rises by roughly 14 picks per hour, about a 3.4 percent uplift per arm. Scale that to 50 arms across a site and 250 shifts per month and the annual labor equivalent becomes nontrivial. These are back-of-envelope numbers, but they show why product teams should budget for not only better models but also more expensive sensors and tighter control loops when aiming for real productivity gains. The savings from a cheaper camera can be irrelevant if a perception error causes a five-minute stop. The point is not heroic numbers; it is that the cost equation pivots toward sensor and compute investment once systems escape the lab.
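The pick-rate arithmetic can be checked from the stated inputs alone: 400 picks per hour and 0.3 seconds saved per pick. The sketch below is a minimal worked example under the assumption that the 400 picks per hour already includes the unplanned delay; the function name is hypothetical.

```python
SECONDS_PER_HOUR = 3600.0


def uplift_from_delay_cut(baseline_picks_per_hour: float,
                          delay_cut_seconds: float) -> tuple[float, float]:
    """Return (extra picks per hour, fractional uplift) for one arm.

    Assumes the baseline rate already includes the unplanned delay, so
    cutting the delay shortens every pick cycle by the same amount.
    """
    baseline_cycle = SECONDS_PER_HOUR / baseline_picks_per_hour  # 9.0 s at 400/hr
    new_cycle = baseline_cycle - delay_cut_seconds               # 8.7 s after the cut
    new_rate = SECONDS_PER_HOUR / new_cycle
    extra = new_rate - baseline_picks_per_hour
    return extra, extra / baseline_picks_per_hour


extra_picks, uplift = uplift_from_delay_cut(400, 0.3)
print(f"{extra_picks:.1f} extra picks/hour, {uplift:.1%} uplift per arm")
# prints "13.8 extra picks/hour, 3.4% uplift per arm"
```

The uplift is modest per arm because 0.3 seconds is a small slice of a 9-second cycle; the case for better sensors gets stronger on faster cycles or where perception errors trigger long stops rather than brief delays.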

Ace is less a party trick and more a blueprint for autonomous systems that must perceive minute physical cues and act in milliseconds.

Why competitors and incumbents will respond fast

The Ace architecture is modular. Perception improvements, simulation curricula, and control mappings can be reused or adapted across industries. Robotics firms, cloud providers, and chip vendors see a runway: better event cameras, lower-latency inference on edge accelerators, and stricter real-time guarantees in middleware. Expect partnerships between industrial robot suppliers and AI labs, and expect the usual patent fences to sprout. The real contest will be commoditizing the training data pipelines and safety layers that businesses require before they deploy these systems at scale.

The cost nobody is calculating yet

Most commentary focuses on algorithmic novelty, but the hidden bill will be hardware integration and ongoing maintenance. High-speed cameras and custom actuators increase CapEx, while the simulation-to-real pipeline demands continuous data collection and retraining, which drives OpEx. For many midmarket firms there is a tradeoff: buy a packaged, safer but slower solution, or invest in an Ace-like stack to chase efficiency. That choice will define winners over the next five to ten years.

Risks and open questions that will decide adoption speed

Key technical questions remain about generalization outside controlled courts, long-term wear and tear on specialized actuators, and how these systems fail when sensors are occluded. There are also social risks: worker displacement in narrow roles, and dual-use concerns when high-speed perception is repurposed for surveillance or military applications. Public policy and procurement rules will likely shape deployment cadence more than pure engineering constraints. The Washington Post highlighted the dual-use nature of the technology and the need for measured governance as systems migrate from demonstration to deployment. (washingtonpost.com)

A short practical close for product leaders

Treat Ace as a technology demonstrator and a procurement warning. Teams should begin evaluating where low-latency perception and closed-loop control could materially reduce costs or unlock products that humans cannot safely deliver at scale. Start with narrow pilots that define clear safety and ROI metrics, and plan sensor and compute budgets accordingly.

Key Takeaways

- Sony’s Ace demonstrates that high-speed perception plus reinforcement learning can produce reliable, competitive real-world behavior in physical tasks.

- The technical advances on the ping pong table map directly to industrial and service robotics where latency and unpredictability are central costs.

- Commercial adoption will hinge on hardware integration costs and robust safety layers more than on algorithm novelty.

- Product teams should run small, measurable pilots to understand sensor, compute, and maintenance tradeoffs before broad deployment.

Frequently Asked Questions

How soon could companies adopt this level of robot perception in warehouses?

Adoption depends on industry risk tolerance and margins; early pilots could appear in 12 to 24 months if vendors package perception and control as turnkey modules. Integration and safety certification will be the primary bottlenecks, not the core AI algorithms.

Will this technology take jobs away from humans in logistics?

It will automate specific, repetitive tasks and augment many others, shifting labor toward oversight and exception handling. Net job impacts depend on sector growth, regulatory responses, and how quickly reskilling occurs.

Do companies need to build their own simulations to benefit from these advances?

Not necessarily. Third party simulation platforms and pretrained policy banks will emerge, but firms doing novel tasks will still need custom simulation curricula to cover domain specifics and edge cases.

Is the technology safe enough for human interaction right now?

Safety in research demos is different from industrial safety certification. Businesses should expect months of additional engineering for redundancy, formal verification, and regulatory compliance before human-adjacent deployment.

Will smaller robotics firms get left behind?

Smaller firms can succeed by specializing in integration, domain expertise, or niche sensors rather than competing head-to-head on end-to-end stacks. Partnerships and white-labeling of perception modules will level the playing field.

Related Coverage

Readers who followed this story may want to explore how event-based cameras change the economics of edge AI, the rise of simulation-driven reinforcement learning in control systems, and the intersection of robotics and procurement law. Each of these topics explains a piece of how a ping pong robot turns into a practical product.

SOURCES:

- https://www.nature.com/articles/s41586-026-10338-5

- https://apnews.com/article/ai-table-tennis-robot-ping-pong-sony-995b239945e0dc8d7bea918a850969dc

- https://www.techradar.com/ai-platforms-assistants/it-totally-blew-my-mind-sonys-project-ace-robot-plays-ping-pong-better-than-the-pros-and-could-mark-a-major-robotics-turning-point

- https://www.washingtonpost.com/business/2026/04/22/ai-table-tennis-robot-ping-pong-sony/2d62f682-3e5c-11f1-bb46-ed564688d953_story.html

- https://www.latimes.com/sports/story/2026-04-23/ai-milestone-ping-pong-robot-ace-beats-human-players-baseball-next-nature-study