Devious Prankster Posts Real Monet Painting, Tells People It Is AI Generated, and Watches the Chaos Unfold

When the internet was asked to critique an “AI Monet,” it accused the real painting of having no soul. The joke was meaner than the punchline.

A user pasted a Claude Monet water lilies image into a social feed, tagged it as AI-made, and invited critique. The thread filled with confident diagnoses of generative model flaws and polite moral outrage, which made the stunt feel less like comedy and more like a stress test of online trust. (petapixel.com)

The obvious headline is that social media remains terrible at distinguishing signal from label. The underreported commercial angle is that this reflex is now a risk vector for any cyberpunk-themed business that trades in aesthetics, provenance, or authenticity; the real cost is reputational and operational, not merely rhetorical. (startupfortune.com)

Why the prank landed harder than the prankster expected

The prank worked because the internet has built a default skepticism that treats the label “AI-generated” like a criticism. People scan for the telltale artifacts and then project a whole theory about authorship and value. When that theory is wrong, the social corrections do not arrive gently. Platforms amplify certainty faster than correction. (english.dotdotnews.com)

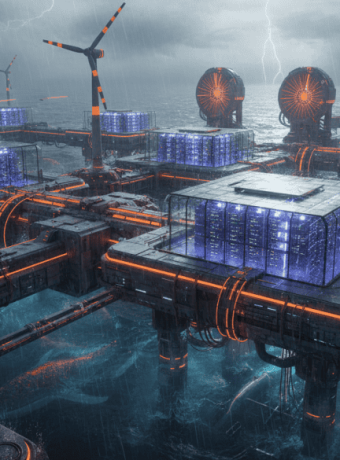

For cyberpunk communities that prize aesthetic authenticity and anti-establishment cred, that reflex is a contagion. If a calculated claim about an image can flip consensus, then curated visuals, NFTs, gallery listings, and in-world lore can all be weaponized. The stunt is a small experiment with very large implications for trust economies.

How this intersects with the art market and forgery detection

The art world has already been battling both low-tech fakes and high-tech confusion; machine learning tools are now used to spot forgeries even as generative models make mimicry easier. Museums and online marketplaces have struggled to keep provenance clear, and instances of fake Monets on auction sites show the real-world stakes of visual doubt. This prank sits at the intersection of those problems. (theguardian.com)

Cyberpunk aesthetics trade on the blurred line between analogue grit and hyperdigital shimmer. When that line becomes politically weaponized online, curation becomes a liability and authenticity becomes expensive. Expect collectors, curators, and brand managers to start budgeting for verification in ways they did not before.

The social mechanics the prank exposed

The post invited commentary by asking users to explain why the “AI image” was inferior. People obliged with intricate art historical takes, technical jargon, and moral judgments about automation replacing craft. The replies turned into a mirror that reflected more about online culture than about Monet. (petapixel.com)

A second-order lesson is that labels exert control. Say “AI” and observers look for shorthand flaws; say “authentic” and people look for pedigree. For cyberpunk brands using generative visuals, the same label choices will shape buyer instincts and press cycles.

The cost nobody is calculating for small creative businesses

A freelance studio of 5 to 10 people that sells cyberpunk prints and commissions might see a 10 percent drop in conversion if a prominent reviewer publicly doubts authenticity. If the studio averages 200 sales per month at 60 dollars each, or 12,000 dollars in monthly revenue, that is 1,200 dollars lost each month. Add a 300 dollar monthly subscription to a provenance and watermark verification service and a part-time employee at 20 hours a month to manage disputes at 25 dollars per hour, which comes to 500 dollars, and the monthly hit rises to about 2,000 dollars. Numbers on that scale determine whether a microgallery survives a viral rumor. The math is boring and persuasive, like a tax code with a neon sign.
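The arithmetic in the studio example can be checked with a short Python sketch. The function and parameter names are illustrative and do not come from any source; the inputs are the figures stated in the paragraph, and the sketch assumes that a 10 percent drop in conversion means 10 percent fewer sales at the same price.

```python
def monthly_hit(sales_per_month, price, conversion_drop_pct,
                subscription, dispute_hours, hourly_rate):
    """Sum lost revenue, verification subscription, and dispute labor (all in dollars)."""
    # Assumption: a conversion drop of N percent removes N percent of monthly sales.
    lost_revenue = sales_per_month * price * conversion_drop_pct / 100
    dispute_labor = dispute_hours * hourly_rate
    return {
        "lost_revenue": lost_revenue,
        "subscription": subscription,
        "dispute_labor": dispute_labor,
        "total": lost_revenue + subscription + dispute_labor,
    }


# Figures from the studio example: 200 sales at 60 dollars, 10 percent drop,
# 300 dollar subscription, 20 hours of dispute handling at 25 dollars per hour.
studio = monthly_hit(200, 60, 10, 300, 20, 25)
for item, dollars in studio.items():
    print(f"{item}: {dollars:.0f}")
```

The lost revenue is the largest component, so the total is most sensitive to the assumed conversion drop; changing that one input is the quickest way to test a different scenario.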

A 20 person creative agency that licenses cyberpunk assets for film and games faces a different calculus. Losing a single enterprise client over provenance concerns can cost 30,000 dollars to 100,000 dollars in annual recurring revenue. The downstream costs include legal fees to contest misattribution and the loss of future collaborations, which digital reputation systems are not yet designed to quantify.

Practical steps for businesses with 5 to 50 employees

Start by embedding provenance metadata into assets at creation and maintaining a simple hashing workflow that links public content to private records. Offer buyers verifiable receipts and require basic authentication for high-value commissions. Set aside a small contingency fund for reputation management equal to one month of payroll; the price of silence on a rumor is often higher than the cost of a calm correction.
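One way a hashing workflow of this kind might look is sketched below in Python, using only the standard library. Everything named here is hypothetical: the `register` and `matches_record` helpers, the in-memory `records` dictionary standing in for a private record store, and the sample title and file name. It is a minimal sketch of the idea, not a description of any particular verification product.

```python
import hashlib
from datetime import datetime, timezone


def fingerprint(asset_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of the exact published bytes."""
    return hashlib.sha256(asset_bytes).hexdigest()


def register(private_records: dict, asset_bytes: bytes,
             title: str, source_files: list) -> dict:
    """Store private details under the asset's hash and return a public receipt."""
    digest = fingerprint(asset_bytes)
    private_records[digest] = {
        "title": title,
        "source_files": source_files,  # working files kept in-house
        "registered_at": datetime.now(timezone.utc).isoformat(),
    }
    # The receipt exposes only the title and hash, not the private record.
    return {"title": title, "sha256": digest}


def matches_record(private_records: dict, asset_bytes: bytes) -> bool:
    """Check whether these exact bytes were registered earlier."""
    return fingerprint(asset_bytes) in private_records


# Hypothetical usage with stand-in bytes instead of a real image file.
records = {}
original = b"stand-in bytes for an exported print file"
receipt = register(records, original, "Neon Alley No. 3", ["neon_alley_v7.psd"])
verified = matches_record(records, original)
altered_verified = matches_record(records, original + b" ")
print(receipt["sha256"][:16], verified, altered_verified)
```

A hash of this kind only shows that a file is byte-for-byte identical to one that was registered. A copy that a platform has resized or re-encoded will not match, so the studio would need to hash the exact file it distributes, and the hash by itself says nothing about how the image was made.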

If a post accuses a piece of being AI-made, publish the creation files, timestamps, and payment receipts within 24 hours to blunt speculation. It will feel awkward to publish internal documents, but silence is what turns mistakes into campaigns.

The platforms that make this worse and why timing matters

Social feeds reward certainty, not nuance, so quick accusations gain traction and corrections lag. That dynamic is the same mechanism that enables misinformation to spread about politics, products, and people. Tech platforms have a moderation problem that is also a business risk for any brand leaning into speculative or retro-futurist aesthetics. (washingtonpost.com)

Competitors in online content verification and provenance tracking are racing to offer solutions. Expect startups and established players to pitch easy integrations for galleries and studios, but beware vendor lock-in and opaque machine learning claims. The timing matters now because visual generative tools are mainstream and consumer skepticism has hardened into habit. (startupfortune.com)

Risks and hard questions that stress-test the claims

Can platforms reliably label content without introducing new biases? What happens when a verified provenance system is gamed by bad actors with inside access? Will liability shift to platforms, creators, or verification vendors if a mislabeling incident destroys a business relationship? These are operational risks that require legal counsel and realistic insurance conversations.

There is also a cultural risk. If every aesthetic claim requires forensic certification, the underground inspiration that fuels cyberpunk may calcify into compliance documentation. It would be tragic if rebellion became a checkbox.

If a crowd convinced it was correct can misidentify a Monet while sipping coffee, imagine what it can do to your brand image at 2 a.m. on a Friday.

What businesses should budget for right now

Budget for basic verification tools, a small reputation response team of one to two people, and a legal retainer that can be called for rapid takedown or clarification letters. For a 5 to 50 person business this is not heroic spending. A 500 to 1,500 dollar monthly line item buys significant protection and peace of mind. Think of it as insurance against a crowd that prefers certainty to curiosity.

Forward-looking close

The prank was a blunt instrument that revealed a soft underbelly of online visual culture; the next step is to treat verification as design, not as afterthought, and to build small defenses that preserve creative risk without surrendering to rumor.

Key Takeaways

- Small creators should assume any public image may be mislabeled as AI-generated and budget 500 to 1,500 dollars per month for verification, response, and legal readiness.

- Labels shape perception more than pixels do; communicate provenance proactively to control narratives.

- Platforms amplify certainty faster than corrections; rapid, transparent documentation is a practical defense.

- The cost of silence after a misattribution often exceeds the cost of immediate clarity.

Frequently Asked Questions

How can a 10 person cyberpunk studio prove an artwork is not AI generated?

Keep original project files, time-stamped edits, layer histories, and receipts for materials or software. Share a selective proof package with clients that includes hashes and a private verification portal to avoid exposing sensitive IP.

What is the cheapest effective provenance method for small galleries?

Use a digital watermarking service with asset hashing and a ledgered timestamp record, which can start as low as 100 dollars a month. Combine that with clear sales receipts and signed authenticity statements to make disputes easier to resolve.
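To make "ledgered timestamp record" concrete, the following Python sketch shows one common approach, a hash-chained append-only log in which each entry commits to the one before it. This is an assumption about how such a record could work, not a description of any specific service; the function names, the entry fields, and the sample timestamps are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous digest" for the first entry


def entry_digest(body: dict) -> str:
    """Hash an entry body in a stable (sorted-key) JSON form."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(ledger: list, asset_sha256: str, timestamp: str) -> dict:
    """Append an entry whose digest covers the asset hash, time, and prior digest."""
    prev = ledger[-1]["digest"] if ledger else GENESIS
    body = {"asset_sha256": asset_sha256, "timestamp": timestamp, "prev": prev}
    entry = dict(body, digest=entry_digest(body))
    ledger.append(entry)
    return entry


def verify_ledger(ledger: list) -> bool:
    """Recompute every digest and check that each entry links to the previous one."""
    prev = GENESIS
    for entry in ledger:
        body = {k: entry[k] for k in ("asset_sha256", "timestamp", "prev")}
        if entry["prev"] != prev or entry["digest"] != entry_digest(body):
            return False
        prev = entry["digest"]
    return True


# Hypothetical usage: two registered assets, then an attempt to backdate the first.
ledger = []
append_entry(ledger, hashlib.sha256(b"print-001").hexdigest(), "2026-05-14T09:00:00Z")
append_entry(ledger, hashlib.sha256(b"print-002").hexdigest(), "2026-05-15T10:30:00Z")
ok_before = verify_ledger(ledger)
ledger[0]["timestamp"] = "2020-01-01T00:00:00Z"  # tampering with an old entry
ok_after = verify_ledger(ledger)
print(ok_before, ok_after)
```

A ledger that a gallery keeps entirely on its own machines can still be rebuilt from scratch by the gallery, so it is weaker evidence than one whose digests are periodically lodged with an independent party. That is the part a paid service would be expected to supply.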

Will platforms start labeling all images as AI or not AI for liability reasons?

Platforms are experimenting with soft labels and provenance indicators, but broad mandatory labeling is unlikely without regulation. Businesses should plan for mixed signals and own their verification instead of relying entirely on platform badges.

If a viral post accuses a piece of being AI, what should a small business do first?

Publish verifiable creation metadata, call out the factual record calmly, and route inquiries to a single official channel. Quick transparency neutralizes wild speculation far faster than public arguments.

Do these verification measures hurt creative spontaneity?

They can add friction, but design choices can limit intrusion; for example, automated hashing and lightweight receipts preserve workflow while creating a safety net. The aim is to protect the creative edge, not to bureaucratize it.

Related Coverage

Explore how provenance ledgers are changing independent game studios, why licensing disputes are the new battleground for AI art, and how cyberpunk fashion brands are using blockchain to prove authenticity. These adjacent stories explain the tools and market dynamics that will matter to creatives and small businesses next.

SOURCES:

- https://petapixel.com/2026/05/14/someone-shared-a-real-monet-painting-as-ai-and-asked-for-critiques/
- https://startupfortune.com/a-real-monet-labeled-as-ai-exposed-the-new-trust-problem-online/
- https://english.dotdotnews.com/a/202605/15/AP6a06f5b9e4b09ea233156aee.html
- https://www.theguardian.com/artanddesign/article/2024/may/08/fake-monet-and-renoir-on-ebay-among-counterfeits-identified-using-ai
- https://www.washingtonpost.com/technology/2025/08/17/ai-video-slop-creators/