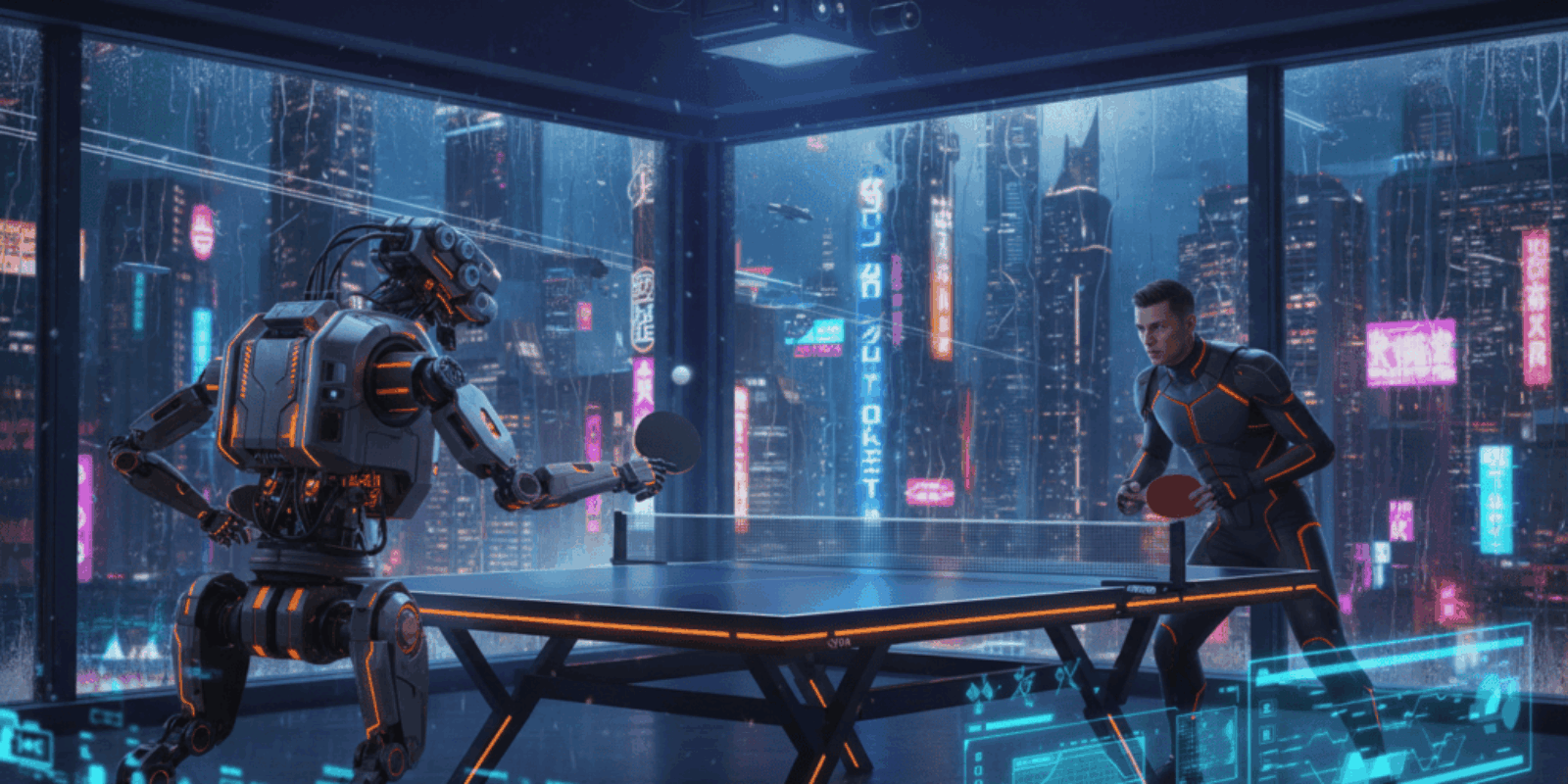

Robot at the Table: Sony’s Ace Turns Ping Pong into a Preview of Cyberpunk Reality

When a bright red paddle moves faster than a human eye can track, the room stops being a lab and starts feeling like a scene from a noir arcade.

Lights glint off a polished industrial arm as an elite player sizes it up. The human breathes, the ball arcs, and the robot replies with a trajectory that looks wrong and somehow inevitable. Spectators laugh nervously, then realize the laugh is at themselves for mistaking reflex for advantage.

Most reporters treat this as another milestone in embodied AI and an impressive engineering demo. That reading is true and comforting, but the overlooked story is how a machine that learns unorthodox, almost adversarial tactics in regulated play signals a new supply chain of capabilities for entertainment, security, and experiential hardware businesses. This is not just a faster arm; it is a laboratory for strategies that could be packaged, licensed, and monetized in ways the sports industry did not plan for.

Why the table is relevant to cyberpunk culture now

Cyberpunk has always mixed sleek tech and messy commerce. A robot that invents moves humans did not teach it maps neatly onto the genre’s obsession with emergent agency and corporate platforms that sell attention. Sony’s project tests how an autonomous agent performs within defined social rules while exploiting loopholes in strategy and human expectation. That duality is the story cyberpunk aficionados and designers care about: technology that obeys the rulebook but rewrites the game.

The mainstream facts, briefly

Sony AI’s Ace is described in a peer-reviewed paper that documents matches played under standard International Table Tennis Federation conditions, including regular rackets and court dimensions. According to Nature, the research frames Ace as the first autonomous system to reach expert human performance in this kind of physical sport. (Nature)

What the company actually showed inside the demo room

Project Ace combines a multi-joint industrial arm, high-speed vision, and learned policies that adapt to spin and placement in real time. The team’s blog details latency metrics and hardware choices, noting the end-to-end reaction times and the camera array that make the system possible. (Sony AI)

Competitors and why this moment arrived when it did

Other labs have chased robot table tennis as a benchmark for embodied intelligence, but Sony’s approach layered vision, simulation training, and industrial actuation into a cohesive system. The Guardian reported on the series of matches and quoted project leaders who stressed iterative improvements that moved Ace from a training rig to regulated play. (The Guardian) This work rides a wave of progress in perception chips, cheaper servomotors, and simulation-to-real transfer that converged in the last 24 months.

How Ace wins and what the numbers say

Ace scored multiple wins against elite players in staged trials that ran from April 2025 into early 2026. By design, the machine responds with latency below human reaction time, and it can generate unusual spin and placement patterns that humans rarely attempt repeatedly in competition, creating fatigue and strategic confusion. AP News noted the milestone and quoted Sony representatives calling the system a landmark for machines operating in rapidly changing environments. (AP News)

This robot does not simply react faster than a human; it invents angles humans did not expect and then forces them to adapt.

Why cyberpunk creators and experience designers should pay attention

Ace is a template for embodied agents that become content creators rather than mere executors. An arcade operator could license a version of this stack to run unpredictable opponents that keep players coming back, or theme parks could deploy adversarial animatronics that adapt to crowds in real time. The productization route is short: sensors, a control stack, and a content layer that encodes persona. TechRadar’s writeup captures the industry excitement and the sense that this is a turning point rather than a single stunt. (TechRadar)

A small operations aside for skeptics who fear hype and limited budgets: renting a demo unit for a three-month pop-up will cost less than developing the whole stack. No one is suggesting the average bar should buy an industrial robot, but contracting the experience is entirely plausible within two to four product cycles.

Practical implications for businesses with 5 to 50 employees

A boutique arcade with eight tables can model a deployment cost as hardware lease plus software fees. Assume a mid-range robotic arm lease at 2,500 dollars per month, a vision and compute stack subscription at 1,200 dollars per month, and a one-time integration cost of 7,500 dollars. Over 24 months the total cost is 2,500 times 24 plus 1,200 times 24 plus 7,500, which equals 96,300 dollars. If the robot's table charges 15 dollars per 20-minute session and operates at 40 percent capacity for 10 hours per day across 26 days per month, revenue comes to 15 dollars times 3 sessions per hour times 10 hours times 26 days times 0.4 times 24 months, which equals 112,320 dollars. That leaves about 16,000 dollars over two years before staffing and rent, a margin that holds only if the robot sells novelty and repeat play. These numbers assume modest utilization; licensing and merchandising upsells increase upside.
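The arithmetic in this scenario is easy to get wrong by hand, so here is a minimal Python sketch that recomputes it from the stated inputs. The constant and function names are illustrative assumptions, not part of any Sony or vendor tooling, and the model covers a single robot table only. With the figures above it yields a 24-month cost of 96,300 dollars and revenue of 112,320 dollars.

```python
# Inputs come from the scenario in the text; all names here are hypothetical.
MONTHS = 24
ARM_LEASE_PER_MONTH = 2_500           # mid-range robotic arm lease, dollars
STACK_SUBSCRIPTION_PER_MONTH = 1_200  # vision and compute subscription, dollars
INTEGRATION_ONE_TIME = 7_500          # one-time integration cost, dollars

PRICE_PER_SESSION = 15                # dollars per 20-minute session
SESSIONS_PER_HOUR = 3                 # three 20-minute sessions per hour
HOURS_PER_DAY = 10
DAYS_PER_MONTH = 26
UTILIZATION = 0.40                    # share of available sessions actually sold


def total_cost(months: int = MONTHS) -> int:
    """Recurring fees over the period plus the one-time integration cost."""
    monthly = ARM_LEASE_PER_MONTH + STACK_SUBSCRIPTION_PER_MONTH
    return monthly * months + INTEGRATION_ONE_TIME


def total_revenue(utilization: float = UTILIZATION, months: int = MONTHS) -> float:
    """Ticket revenue for one robot table at the given utilization."""
    full_month = PRICE_PER_SESSION * SESSIONS_PER_HOUR * HOURS_PER_DAY * DAYS_PER_MONTH
    return full_month * utilization * months


def break_even_utilization(months: int = MONTHS) -> float:
    """Utilization at which ticket revenue exactly covers total cost."""
    return total_cost(months) / total_revenue(1.0, months)


if __name__ == "__main__":
    cost = total_cost()
    revenue = total_revenue()
    print(f"24-month cost:     {cost:,} dollars")
    print(f"24-month revenue:  {revenue:,.0f} dollars")
    print(f"Margin before staffing and rent: {revenue - cost:,.0f} dollars")
    print(f"Break-even utilization: {break_even_utilization():.1%}")
```

Under these assumptions the table breaks even at roughly 34 percent utilization, so the 40 percent figure leaves little room for slow months. Changing `UTILIZATION` or `PRICE_PER_SESSION` shows how sensitive the case is to each input.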

Risks and open questions that stress test the claims

Hardware safety and liability remain the largest unknowns when industrial arms interact with paying customers in confined spaces. Regulatory gaps around embodied agents and public-facing robotics could force costly certifications. There is also a strategic risk: if the value is novelty, it decays fast and operators must refresh content regularly. Finally, the ethical question of emergent adversarial play needs governance; a machine that optimizes to exploit human weaknesses could be repurposed for surveillance or social engineering unless constraints are built in.

Why the underreported angle matters for venture and design teams

This is a productization story more than a research trophy. The same stack that discovers unorthodox moves in sport can discover unorthodox interaction patterns in retail, hospitality, and public spaces. That creates revenue paths that are not obvious from the first headlines and justifies investment in modular embodied AI that can be repurposed across industries. If investors treat Ace as a closed research artifact, they will miss the larger market for adaptive, rules-aware agents.

Forward-looking close

Expect rapid experimentation outside labs as studios and platform vendors try to convert spectacle into subscriptions and experiences into recurring revenue; that is what will determine whether this milestone becomes infrastructure or a one-season novelty.

Key Takeaways

- Sony’s Ace shows that an autonomous system can reach expert human performance in a physical sport, and that its unpredictability could be monetized.

- Small experience companies can build business cases around leased embodied AI with clear math for revenue and break-even.

- Safety, regulation, and ethical constraints are the soft costs every operator must budget for up front.

- The true value lies in the agent’s ability to invent interaction patterns that become repeatable content across venues.

Frequently Asked Questions

Can a small gaming bar afford a robot like Ace?

Yes. Leasing and software subscriptions sharply reduce the upfront capital burden. A realistic two-year case shows that the lease, the subscription, and modest integration costs can be paid off with conservative utilization and standard ticket pricing.

Will a robot like Ace replace human entertainers in arcades or venues?

Not entirely. Robots can augment and scale experiences but human staff provide hospitality, troubleshooting, and narrative that machines do not. Most operators will mix human and robotic offerings for the foreseeable future.

What are the safety requirements to deploy such a robot in public spaces?

Safety will require physical barriers, certified fail-safe electronics, and liability insurance that covers mechanical failure and training accidents. Expect vendors to offer compliance packages as part of the integration.

How quickly will competitors copy Sony’s approach?

The core technologies are broadly understood, so expect competitive clones in 12 to 36 months from well-funded labs and niche robotics firms. Differentiation will come from content, UX, and deployment scale.

Could this tech be used for surveillance or coercive systems?

The perception and rapid actuation capabilities create dual use risks, requiring governance and design limits. Vendors and regulators will need to define clear acceptable use boundaries.

Related Coverage

Readers may want to explore embodied AI in theme parks, the economics of experiential entertainment, and the ethics of adaptive agents for public interaction on The AI Era News. Each of those beats explains how a single research milestone becomes a supply chain, legal challenge, and design brief all at once.

SOURCES: https://www.nature.com/articles/s41586-026-10338-5, https://ai.sony/blog/inside-project-ace-discover-the-robot-athlete-that-competes-with-professional-table-tennis-players, https://apnews.com/article/995b239945e0dc8d7bea918a850969dc, https://www.theguardian.com/p/x4q25k, https://www.techradar.com/ai-platforms-assistants/it-totally-blew-my-mind-sonys-project-ace-robot-plays-ping-pong-better-than-the-pros-and-could-mark-a-major-robotics-turning-point