It Turns Out That Constantly Telling Workers They Are About to Be Replaced by AI Has Grim Psychological Effects

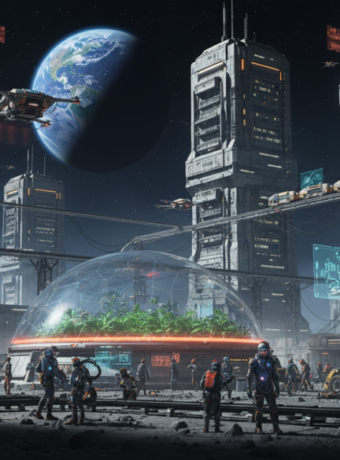

How a corporate pep talk about inevitable automation is reshaping the moods, art, and business decisions of cyberpunk creators and the niche industries that serve them.

A night‑shift developer at a small studio opens a company update and reads, in plain language, that the firm will only hire if teams can prove AI cannot do the work. She closes the laptop, thinks about her portfolio, and spends the next week prompting models instead of sketching. The room has never felt less like a creative studio and more like a waiting room; the neon here feels clinical.

Most observers treat these memos as blunt economic realism: companies are signaling a skills bar and pushing upskilling. That is true on the surface, but the underreported effect is cultural. For cyberpunk communities and the game design, art, and indie publishing businesses that orbit them, the steady drumbeat that humans are expendable by design corrodes psychological safety, reduces experimentation, and reshapes what creators are willing to risk.

Why the warning resonates harder in cyberpunk circles

Cyberpunk culture dramatizes a world where corporations and code squeeze the human out of systems. When workplace rhetoric echoes that fiction, the genre’s fans and professionals stop treating it as speculative allegory and start treating it as instruction. The aesthetic appetite for dystopia collides awkwardly with the lived experience of being told one’s work might be a legacy artifact.

From CEO memos to grassroots panic in studios

Top executives are increasingly explicit. Shopify’s CEO told teams that AI usage is now a baseline expectation and that new hires must be justified by proving AI cannot do the work. (fortune.com) That message is operationally clear and emotionally blunt; some managers use it to spur efficiency, others use it as a veiled replacement threat.

The corporate proof point that fuels the fear

Executives point to hard numbers when they justify headcount pauses. Klarna, for example, reported that its AI assistant handled the equivalent workload of hundreds of customer service agents, a change that materially reshaped hiring and compensation choices in the firm. (cnbc.com) That kind of claim is headline fodder and also a practical stick companies wave to enforce rapid adoption.

What the academic literature says about the mind under threat

Longstanding occupational research shows that perceived job insecurity reliably increases anxiety, depression, and cognitive load, and it suppresses creative risk taking in professional contexts. (pmc.ncbi.nlm.nih.gov) That is not an opinion. The physiology of threat taxes attention and narrows possibilities, which is the opposite of what creative, speculative genres like cyberpunk need to thrive.

How games, art, and fans are reacting now

A split is emerging across the games industry: large studios lean into generative tooling while many indie teams market being “AI free” as a badge of artistic authenticity. This movement has become visible enough that mainstream outlets have catalogued the backlash. (theverge.com) Fans who once adored cyberpunk irony now scan credits for “human made” like it is social proof. That is a market response and a survival strategy rolled into one. The joke here is that the genre invented bleak corporate futures and now has to buy a seat at the human table; funny, if it were not expensive.

The shadow of replaceability is not a plot device; it is a workplace toxin that rewires creativity.

The cost small cyberpunk studios must calculate

For a studio of 5 to 50 employees, the math is blunt. If a small studio pays an average fully loaded cost per employee of $80,000 per year and decides to automate art or QA tasks that consume 25 percent of team hours, potential annual labor savings could be $100,000 to $1,000,000 depending on scale and what is automated. But the counterfactual matters: if that automation reduces quality or angers a core audience, the revenue loss could exceed the cost savings. A single missed launch window or a poor review that reduces annual sales by 10 percent on a $2 million revenue run rate is a $200,000 hit, enough to wipe out the labor savings of a studio with ten or fewer employees. Small teams must model both sides: the efficiency gain and the reputational risk.
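The two sides of that ledger can be put in a few lines of Python. This is a rough sketch, not a financial model: the function and parameter names are hypothetical, it assumes every automated hour converts directly into saved payroll, and it holds revenue at $2 million regardless of studio size, which a real studio would want to replace with its own figures.

```python
# Illustrative trade-off sketch; all names here are hypothetical.
def automation_tradeoff(headcount, loaded_cost=80_000, automated_pct=25,
                        annual_revenue=2_000_000, revenue_drop_pct=10):
    """Return gross labor savings, revenue hit, and net effect in dollars per year."""
    # Assumption: every automated hour turns directly into saved payroll.
    gross_savings = headcount * loaded_cost * automated_pct // 100
    # Assumption: audience backlash shows up as a flat cut to annual revenue.
    revenue_hit = annual_revenue * revenue_drop_pct // 100
    return {
        "gross_savings": gross_savings,
        "revenue_hit": revenue_hit,
        "net": gross_savings - revenue_hit,
    }


# Compare the smallest studio, a mid-sized one, and the largest in the range.
for headcount in (5, 10, 50):
    result = automation_tradeoff(headcount)
    print(f"{headcount:>2} staff: savings ${result['gross_savings']:,}, "
          f"hit ${result['revenue_hit']:,}, net ${result['net']:,}")
```

Under these assumptions the break-even point sits at ten employees: below that, a 10 percent revenue drop costs more than the automation saves, and above it the savings only hold if the drop does not grow with the studio's revenue.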

Practical steps for owners of 5 to 50 person companies

Designate AI as a tool, not a replacement. Set explicit rules for which tasks are safe to automate and which remain human. Invest 5 to 10 percent of payroll savings from automation into retraining and quality assurance. If a studio expects to save $200,000 by automating routine asset generation, earmark $10,000 to $20,000 of that, split between quality review and artist reskilling workshops. Those are modest hedges that preserve craftsmanship and stop a morale drain from becoming a talent exodus. Managers who treat retraining as an afterthought will end up running it to patch morale rather than to build skills, which is slightly tragic and very avoidable.
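The reinvestment rule is simple enough to keep in a budgeting script. The helper below is a minimal sketch with a hypothetical name; it only applies the 5 to 10 percent band to an expected savings figure and says nothing about how to divide the money between quality assurance and retraining.

```python
# Hypothetical helper: applies the 5 to 10 percent reinvestment band to expected savings.
def reinvestment_band(expected_savings, low_pct=5, high_pct=10):
    """Return (low, high) dollars to set aside for retraining and quality assurance."""
    low = expected_savings * low_pct // 100
    high = expected_savings * high_pct // 100
    return low, high


low, high = reinvestment_band(200_000)
print(f"Set aside ${low:,} to ${high:,}")
```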

The cost nobody is calculating

There is a less visible liability here: the erosion of trust. Repeated signaling that some roles are dispensable by algorithm reduces organizational commitment and drives people to hide knowledge, avoid hard problems, or jump ship. Those behaviors are slow leaks; they do damage in months and show up as churn, missed deadlines, and a diminished creative standard. The long tail of those losses is rarely reflected in quarterly accounting.

Risks and open questions that stress-test the claims

The central uncertainty is not whether AI improves productivity in narrow tasks but whether leaders can make a sustained cultural bargain: extract efficiency while protecting the human skills that attract customers. Legal risks around training data, evolving platform rules for AI content, and potential union actions in games and creative industries add another layer of volatility. Large claims about wholesale replacement often overlook repair costs and quality deficits that companies quietly reintroduce humans to solve.

Where this leaves cyberpunk culture in practice

Cyberpunk creators are at an inflection point: use AI to amplify scale or reject it to protect craft and audience trust. Both choices are defensible but carry different market consequences. The genre’s values complicate the corporate script that says “we will replace you if it is cheaper,” because its audience cares about authenticity in a way most enterprise customers do not.

The practical finish line

Leaders who demand reflexive AI adoption without rebuilding psychological safety will succeed at short-term cost cuts and fail at cultivating the kind of creative risk that keeps cyberpunk culture alive. Simple rule: automate the routine, invest in the human.

Key Takeaways

- Repeatedly telling workers they can be replaced by AI damages mental health and creativity and reduces long-term value.

- High‑profile corporate memos and efficiency claims give executives leverage but also create morale and reputation risk for creative industries.

- Small studios should run concrete tradeoff math and reinvest a slice of automation savings into quality and retraining.

- Cyberpunk communities are reacting by favoring human craftsmanship as a differentiator; audience trust can be worth more than short-term savings.

Frequently Asked Questions

How should a small game studio introduce AI without causing panic?

Explain precisely which tasks AI will handle and why, set quality gates, and frame AI as a time saver for repeatable work. Offer clear retraining pathways and make decisions transparent to reduce rumor and fear.

If a CEO says ‘prove AI cannot do the job’, what should managers document?

Document error rates, edge cases, customer experience impact, and the creative judgement calls where humans outperform AI. Numbers beat rhetoric in boardrooms and calm teams when shared openly.

What immediate signs show employees are suffering from replacement anxiety?

Look for reduced experimentation, increased silence in meetings, elevated turnover intent, and a spike in task requests that shift work away from creative risk toward safe maintenance.

Is it ever financially rational for a 10 person studio to replace artists with AI?

In rare cases where art is strictly template work and customers are indifferent, yes. Most cyberpunk projects rely on distinctive artistry, so the full financial model must include potential revenue loss from an alienated fan base.

How can a cyberpunk publisher signal authenticity to fans?

Transparency in credits, optional ‘AI used’ tags, and curated behind‑the‑scenes content showing human process reassure audiences and can become a marketing asset.

Related Coverage

Readers who liked this piece may want reporting on how creative unions are negotiating AI protections, case studies of studios that rebuilt hybrid workflows after bad AI rollouts, and primers on legal exposure for AI‑trained assets. Those stories illuminate the policy, legal, and operational threads that will shape cyberpunk culture in practice.

SOURCES:

- https://pmc.ncbi.nlm.nih.gov/articles/PMC7454321/

- https://fortune.com/2025/04/08/shopify-ceo-ai-automation-no-new-hires-tech-jobs/

- https://www.cnbc.com/2025/05/14/klarna-ceo-says-ai-helped-company-shrink-workforce-by-40percent.html

- https://link.springer.com/article/10.1007/s44362-025-00016-3

- https://www.theverge.com/entertainment/827650/indie-developers-gen-ai-nexon-arc-raiders