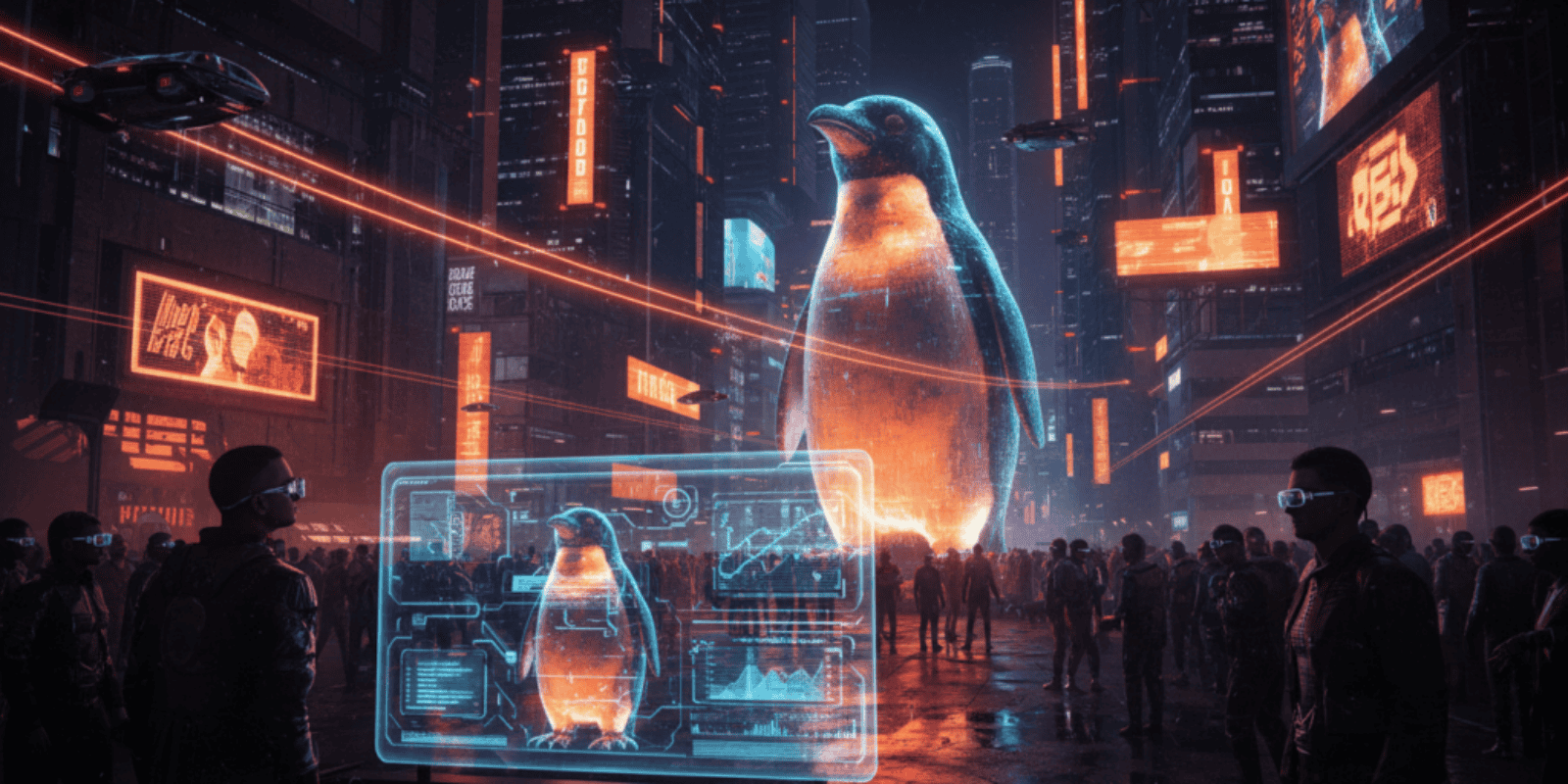

Fake AI Images of Penguin’s Big Penguin Statue Spark Panic Among AI Enthusiasts and Professionals

When a grainy, hyperreal photo of Penguin town’s Big Penguin appeared in developer Slack channels, people did not laugh. They hit search, pinged colleagues, and briefly treated the image as a live incident.

The obvious reading was that a prank had broken out: someone had used a consumer image generator to stage an absurd scene of the town’s mascot in distress and pushed it into tightly knit AI communities. That interpretation explains the immediate outrage and a few confused emergency checks. What matters more for the AI industry is the collateral damage to trust and tooling that followed, the kind of damage that silently raises operating costs for startups and compliance headaches for incumbents.

Why this one statue matters to people who build models

Public sculptures are easy targets for visual manipulation because a single well-framed photo is all an image model needs to build a believable alternate reality. The Big Penguin is a recognisable object, which makes manufactured variants feel more convincing than images of a fictional subject. That combination is exactly what bad actors and pranksters exploit when they want fast, viral believability without creating a full video deepfake.

Regulation, platform policy, and forensic tooling are racing to keep up, but real incidents already show how quickly fake images can cause genuine disruption. One dramatic precedent saw an AI-generated photo of an apparent explosion at the Pentagon briefly unsettle markets and newsrooms, proving that a single fabricated still image can cascade into real economic consequences. (itv.com)

What happened inside the AI community channels

Engineers in model ops channels noticed small artifacts at first: odd shadowing, duplicated patterning on the statue’s base, tiny finger errors on bystanders. The image nevertheless carried a metadata-free plausibility that made it shareable. Verification requests multiplied and legal teams were pinged, because the same Slack threads also contained links to fundraising pages and calls for local volunteers. The panic was not that the statue had been destroyed; the panic was that detection and provenance workflows were not fast enough to stop an operational scramble.

The pattern mirrors incidents where AI-generated images of a school fire triggered emergency responses and diverted local resources, illustrating how synthetic media creates real civic cost. (oecd.ai)

How the spread of a single image can cost real money

A modest scenario: a midmarket newsroom repurposes the Big Penguin image in a social post without verification. The post gets picked up by local influencers and then a commerce partner pulls sponsorship pending a review. That one chain reaction can cost tens of thousands of dollars in lost ad revenue and contract churn. Multiply this across outlets and platforms and the sums scale quickly for publishers that rely on fast traffic.

Enterprise risk assessments that ignore synthetic images are now incomplete. Analysts at security firms and research groups estimate that deepfake-related losses and incident response work are in the tens of millions per quarter for companies managing high volumes of user content. That is the kind of baseline cost a platform must budget for, not because fakes are rare but because they are cheap to produce. (infotech.com)

The human factor: credibility fatigue

Engineers and content moderators burn out fast when forced to adjudicate plausibility for millions of images a day. When communities repeatedly see convincing but false images, the natural reaction is either constant, expensive verification or resigned disbelief. Both outcomes degrade the product experience and increase headcount or tooling spend. The Penguin threads showed both reactions: frantic verification attempts followed by snarky defeat, which is a polite form of surrender. Someone in the channel quipped that the statue now has a better social life than some of the engineers, and the room laughed because that was the only coping mechanism left.

What vendors and platforms are doing right now

Platforms are pairing passive provenance markers with active community moderation, and commercial detection products are getting faster at flagging AI artifacts. Still, detection accuracy is imperfect and actioning flags at scale remains a policy and engineering challenge. The White House’s recent embrace of AI imagery for political messaging has complicated public trust economics, since official use of synthetic visuals lowers the signal-to-noise ratio for platform governance. (theguardian.com)

At the same time, local governments are starting to treat viral fake images as public-safety incidents. In several jurisdictions, authorities have publicly warned that AI-generated animal sightings and staged home-intruder photos have caused needless panic and wasted emergency resources. Those cases underline that the Big Penguin episode fits a global pattern where synthetic visuals create real-world response costs. (indianexpress.com)

Fake images are no longer theatrical stunts; they are operational hazards that change how companies plan, buy tools, and staff teams.

Practical implications for businesses with real numbers

A regional publisher that deals with community photos might add one content-moderation engineer for every 100,000 daily uploads. If that hire costs 150,000 US dollars a year fully loaded, and a conservative 10 percent of their moderation time goes to synthetic-image triage, the operational hit is 15,000 US dollars a year per 100,000 uploads. Multiply across five regional products of similar volume and the line item reaches roughly 75,000 US dollars a year, which is no longer trivial.
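The arithmetic above can be sketched in a few lines of Python. This is a back-of-the-envelope model, not a costing tool: the function name and parameters are illustrative, and the five-product total assumes each product handles about 100,000 daily uploads, which the scenario implies but does not state.

```python
def synthetic_triage_cost(
    daily_uploads: float,
    uploads_per_engineer: float = 100_000,  # one hire per 100,000 daily uploads
    loaded_cost_usd: float = 150_000,       # fully loaded annual cost per hire
    triage_share: float = 0.10,             # share of time spent on synthetic images
) -> float:
    """Annual cost in US dollars attributable to synthetic-image triage."""
    engineers = daily_uploads / uploads_per_engineer
    return engineers * loaded_cost_usd * triage_share


per_product = synthetic_triage_cost(100_000)
# Assumption: five regional products, each with roughly the same upload volume.
five_products = 5 * per_product

print(f"Per product:   {per_product:,.0f} USD per year")
print(f"Five products: {five_products:,.0f} USD per year")
```

Changing `triage_share` is the quickest way to see how sensitive the line item is to the share of moderation time that synthetic images consume.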

For an AI startup selling an image API at scale, the math is different but equally painful: adding automated provenance checks increases latency and cloud costs. If provenance tagging adds 20 milliseconds of compute per call and traffic is 50,000 calls per minute, that is roughly 12,000 extra compute-hours over a 30-day month, which can raise monthly cloud bills by several thousand dollars, not counting the engineering time to integrate and certify the system. These are the small line items that add up to strategic budget decisions.
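A rough way to check the latency figure is to convert the added milliseconds into compute-hours and apply a unit price. The sketch below treats the 20 milliseconds as billable compute time and assumes a 30-day month; the price per compute-hour is a hypothetical placeholder, not a quoted rate from any provider.

```python
def extra_compute_hours_per_month(
    calls_per_minute: float,
    added_ms_per_call: float,
    days: int = 30,  # assumption: 30-day billing month
) -> float:
    """Extra compute-hours per month caused by added per-call processing."""
    calls_per_month = calls_per_minute * 60 * 24 * days
    added_seconds = calls_per_month * (added_ms_per_call / 1000)
    return added_seconds / 3600


# Hypothetical unit price; substitute the rate from an actual cloud contract.
ASSUMED_USD_PER_COMPUTE_HOUR = 0.25

hours = extra_compute_hours_per_month(calls_per_minute=50_000, added_ms_per_call=20)
monthly_cost = hours * ASSUMED_USD_PER_COMPUTE_HOUR

print(f"Extra compute-hours per month: {hours:,.0f}")
print(f"Extra monthly cost at assumed rate: {monthly_cost:,.0f} USD")
```

At the assumed rate the result lands in the low thousands of dollars a month, consistent with the estimate in the text. A higher unit price or a heavier check scales the bill linearly.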

Risks and unanswered questions that keep CISOs awake

Detection arms races are inherently asymmetric: generators can iterate more quickly than forensic models can learn to spot new artifacts. There is also legal ambiguity about ownership and liability when a platform monetises an AI image that later sparks a real incident. Policy remedies are being discussed but are not yet harmonised globally. Which company pays for emergency response when a fake image triggers a local dispatch is still an open question, and insurers are watching closely.

Another unresolved risk is the erosion of research incentives: if labs are constantly policing misuse instead of shipping features, innovation velocity can slow. That is a good problem for risk management but a bad one for product roadmaps. Also, rhetorical trolling at scale, including institutional trolling, changes reputational calculus for regulators and buyers in ways that are hard to quantify.

A forward-looking close with practical insight

AI teams should treat synthetic-image risk like any other operational hazard: quantify exposure, bake detection into the data pipeline, and set clear escalation rules with legal and public safety contacts. That approach costs money but prevents panic, and panic is the most expensive thing of all.

Key Takeaways

- Fake but plausible images can trigger real-world responses, and that operational cost must be budgeted by platforms and publishers.

- Detection tools help but do not eliminate the need for human verification and legal escalation paths.

- Public use of synthetic imagery by institutions complicates trust and raises the cost of moderation across the ecosystem.

- Simple math shows that modest moderation or latency additions rapidly become meaningful line items for high-volume services.

Frequently Asked Questions

How should a small publisher verify a suspicious image quickly?

Use reverse image search, check EXIF and provenance tags when available, and cross-reference official local sources. If the image could impact safety, reach out to local authorities before publishing and include a clear caveat for readers.
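As a minimal illustration of the EXIF check, the sketch below scans the header segments of a JPEG file for an APP1 segment that starts with the EXIF signature, using only the Python standard library. The function name is illustrative, and the check is deliberately narrow: it does not parse EXIF fields or read provenance manifests. Many platforms strip metadata on upload, so a missing EXIF segment is not evidence that an image is fake.

```python
import struct


def has_exif_segment(data: bytes) -> bool:
    """Return True if JPEG bytes contain an APP1 segment with an EXIF header."""
    if data[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG: missing SOI marker")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            break  # malformed or unexpected data; stop scanning
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # start of scan or end of image
            break
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", data[pos + 2 : pos + 4])
        payload = data[pos + 4 : pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False


# Usage with synthetic bytes (not real photos), built only for demonstration.
exif_payload = b"Exif\x00\x00" + b"placeholder"
with_exif = (
    b"\xff\xd8\xff\xe1"
    + struct.pack(">H", 2 + len(exif_payload))
    + exif_payload
    + b"\xff\xd9"
)
stripped = b"\xff\xd8\xff\xd9"

print(has_exif_segment(with_exif))
print(has_exif_segment(stripped))
```

In practice this kind of check is only a first filter. Reverse image search and confirmation from official local sources carry more weight than the presence or absence of metadata.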

Will watermarking solve the problem of fake AI images?

Watermarking helps but is not a silver bullet because watermarks can be stripped or faked. Watermarks are most effective when combined with systemic provenance infrastructure and legal frameworks that penalise malicious removal.

Should companies block all AI-generated images on their platforms?

A blanket ban will harm legitimate creators and products; a risk-calibrated approach that flags, rates, and optionally disallows certain high-risk classes is more sustainable. Policies should be transparent and enforceable.

What immediate tooling investments reduce exposure the most?

Invest in scalable provenance capture, automated artifact detection, and an escalation playbook linking product, legal, and public-safety teams. Those three investments together reduce both false positives and response time.

How will this affect consumer trust in AI-powered visual features?

Trust will fragment: some users will avoid synthetic content while others will embrace it. Companies that clearly label and provide provenance will maintain a premium level of trust with their users.

Related Coverage

Readers who followed this will want to read more about defenses against deepfake fraud, the evolving policy landscape for synthetic media, and how content provenance standards are being built into major cloud provider workflows. The AI Era News has ongoing reporting on verification infrastructure and platform governance that complements this story.

SOURCES: https://www.itv.com/news/2023-05-23/fake-ai-created-image-of-pentagon-explosion-sparks-brief-panic, https://oecd.ai/en/incidents/2025-11-10-3a70, https://indianexpress.com/article/cities/pune/ai-generated-leopard-scares-trigger-panic-pune-forest-dept-to-register-criminal-cases-10427697, https://www.theguardian.com/us-news/2026/jan/29/the-slopaganda-era-10-ai-images-posted-by-the-white-house-and-what-they-teach-us, https://www.infotech.com/research/ss/defend-against-deepfake-cyberattacks