When a Viral Persona Breaks, the Whole AI Stack Feels the Aftershocks

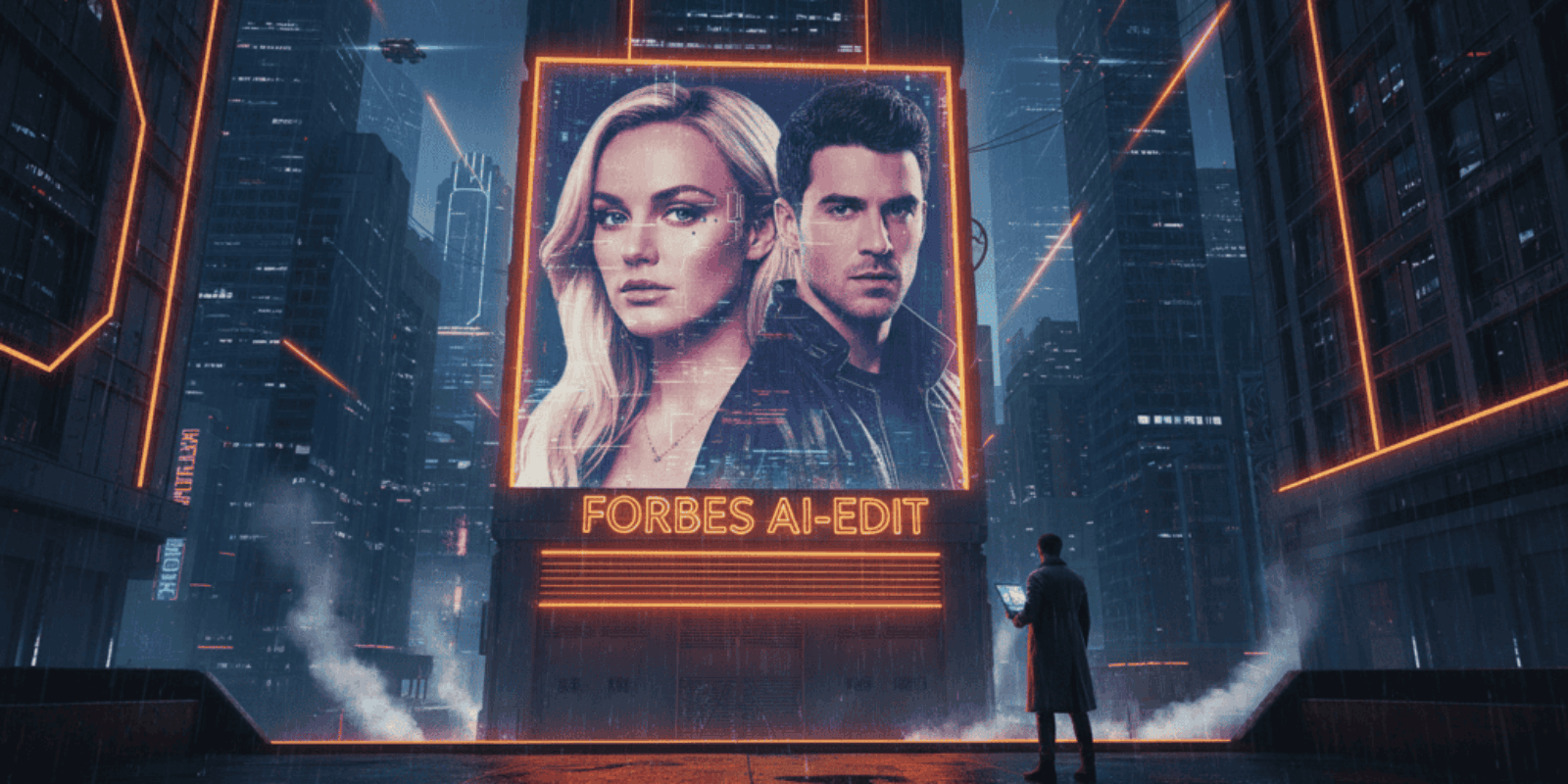

How Lee Andrews’ disputed online persona forces platforms, detection vendors, and advertisers to rethink trust in a generative-media world

A follower scrolls through glossy images of a man who promises a billion-dollar business, celebrity cameos, and a Cambridge doctorate, and a small knot of doubt forms. Two days later, a dozen outlets are questioning whether those photos were made by humans or machines, and whether the people in them ever shook hands in real life. The spectacle reads like celebrity gossip, but the tools that produced it belong to the same stack that enterprises use for marketing, identity, and authentication.

The obvious reading is familiar: this is tabloid drama about a whirlwind marriage and an allegedly embellished biography. The overlooked angle is grimmer for business owners and AI vendors: when a high-profile persona weaponizes generative tools to manufacture credibility, the downstream costs land on platforms, advertisers, verification vendors, and trust frameworks rather than on the individual who originally posted the content. The story is not just about one man’s claims; it is about how synthetic imagery corrodes the assumptions that companies and consumers rely on every day to make decisions.

The image that started the rumour and the reporters who chased it

Several media outlets flagged a pattern of polished but unverifiable visuals on Lee Andrews’ social feeds, including alleged celebrity cameos and product shots that lack corroborating press or filings. Entertainment Daily catalogued multiple examples where fans and sleuths concluded the photos looked digitally fabricated rather than photographic evidence of business deals. (entertainmentdaily.com)

One mainstream outlet recorded Katie Price defending the marriage while acknowledging that allegations about Andrews’ credentials and doctored images were circulating. That public response moved the story from gossip to a reputational problem that businesses should not ignore. (the-independent.com)

Why this matters to the AI industry right now

Generative tools now create images and short videos that are far harder to detect and easier to distribute than was true just two to three years ago. TIME’s testing of a single, advanced model showed it could produce convincing scenes that, when amplified on social networks, could inflame public opinion or alter perceptions in minutes. That leap in quality means every fake handshake or staged cheque picture has much higher potential to influence markets and reputations. (time.com)

At the same time, enterprises and platforms are scaling trust solutions. Deloitte’s research projects the deepfake detection market to expand rapidly and highlights provenance metadata as a primary defense. Yet detection and provenance systems are expensive to implement at global scale, and bad actors adapt quickly. The mismatch between capability and deployment speed is where the real business risk resides. (deloitte.com)

What Lee Andrews claimed and how investigators tested those claims

Public reporting lists a string of ambitious assertions attributed to Andrews: a cover or profile presented as a Forbes feature, intimate ties to political and royal figures, fluency in every language, and personal anecdotes framed to generate sympathy and authority. Independent fact-checkers pulled corporate records, award lists, and public filings and found no corroboration for many of those claims. The dossier of inconsistencies centered not only on words but also on images that lacked provenance or independent confirmation. (tdpelmedia.com)

Reporters traced several visuals to sources or patterns consistent with synthetic creation rather than event photography, and noted missing press releases, absence from corporate registries, and contradictions in timelines. These are the kinds of checks that large brands and vendor risk teams already perform, but at a scale and speed most organizations are not set up to match.

Who else is vulnerable and why competitors should watch

Any company that monetizes creator identity, verification services, or platform authenticity is exposed. Verification platforms that rely on photo checks or simple credential matching can be gamed when imagery is synthetic and biographies are auto-assembled from scraped text. Advertisers that buy influence by persona are at risk too; a sponsored post from a convincing-but-fake executive can redirect marketing dollars into fraud. Rivals in the identity space are now racing to add provenance, robust liveness checks, and cross-platform attestation as default services rather than optional upgrades. The result will be richer products for buyers and more complexity and cost for creators, which will not please everyone, especially the ones trying to sell instant credibility for a ring and a vow.

When the costume department is now a machine learning model, the costume looks real enough to bankrupt a reputation.

The cost nobody is calculating: real math for businesses

A mid-sized advertiser that spends $2,000 to $5,000 per sponsored social post can lose the entire spend if the influencer’s profile is exposed as synthetic and platforms delist the associated inventory. If a deepfake causes a brand to pull 100 posts over a 30-day campaign, the direct media cost alone could be $200,000 to $500,000, and reputation remediation can easily double or triple that figure. For platforms, legal takedown flows and human review cost roughly 10 times as much as automated delivery; adding robust provenance and manual review could add 0.5 to 2 cents per impression to content delivery costs, a line item advertisers will notice. Deloitte’s projections about the growth of the detection market are a proxy for how much companies will soon be forced to spend to maintain baseline trust. (deloitte.com)
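The arithmetic above can be reproduced in a few lines. This is a minimal sketch, not a pricing model: the per-post spend, the 100 pulled posts, and the 0.5 to 2 cents per impression come from the paragraph above, while the 10 million impressions figure and every name in the code are illustrative assumptions. It also reads "double or triple" as a total of two to three times the direct media loss, which is one possible interpretation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """A low/high estimate in US dollars (or cents, where noted)."""
    low: float
    high: float


def direct_media_loss(cost_per_post: Range, posts_pulled: int) -> Range:
    # Media spend written off when sponsored posts are pulled.
    return Range(cost_per_post.low * posts_pulled,
                 cost_per_post.high * posts_pulled)


def with_remediation(direct: Range,
                     low_multiple: float = 2.0,
                     high_multiple: float = 3.0) -> Range:
    # Assumption: "double or triple" means total = direct loss x 2 to x 3.
    return Range(direct.low * low_multiple, direct.high * high_multiple)


def provenance_overhead(impressions: int, cents_per_impression: Range) -> Range:
    # Added delivery cost in dollars; inputs are in cents, so divide by 100.
    return Range(impressions * cents_per_impression.low / 100,
                 impressions * cents_per_impression.high / 100)


def fmt(r: Range) -> str:
    return f"${r.low:,.0f} to ${r.high:,.0f}"


direct = direct_media_loss(Range(2_000, 5_000), posts_pulled=100)
total = with_remediation(direct)
# Hypothetical campaign size of 10 million impressions, for illustration only.
overhead = provenance_overhead(10_000_000, Range(0.5, 2.0))

print("Direct media loss:", fmt(direct))      # $200,000 to $500,000
print("With remediation:", fmt(total))        # $400,000 to $1,500,000
print("Provenance overhead:", fmt(overhead))  # $50,000 to $200,000
```

Swapping in a team's own spend and impression counts turns the sketch into a rough first-pass estimate; it ignores legal fees and advertiser churn, which would push the total higher.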

Product, legal, and operational risks for AI vendors

Platforms that host generative models face a triple threat: misuse, regulatory scrutiny, and erosion of user trust. TIME’s reporting shows models that skirt safeguards are a regulatory lightning rod; firms that fail to invest in obvious mitigations invite policy intervention and reputational backlash. (time.com)

Legal exposure is real too. If synthetic images are presented as proof of business relationships or used to solicit investment, firms in the distribution chain can face fraud claims or takedown obligations. Vendors need clear terms, better model controls, and fast redress mechanisms that map to local law.

Risks and open questions that stress-test the claims

Some uncertainties remain about attribution and intent. Is the person behind the persona a deliberate fraudster, a performance artist, or the victim of a mistaken identity amplified by automation? Journalists who examined the case found a mix of plausible embellishment and probable synthetic fabrication, but proving who trained a model or who authored a composite image is often technically and legally difficult. (tdpelmedia.com)

A second open question is whether provenance standards will gain universal adoption quickly enough to prevent every iteration of this problem. Standards like C2PA are promising, but adoption by device makers and major platforms is partial and uneven. The gap between standard and practice is the exploitable zone.

A practical playbook for product and legal teams

Audit the entire funnel that touches user identity and monetization, from onboarding checks to ad buying flows. Add provenance checks where possible and treat detection as a layered control, combining automated signals with targeted human review. Run a loss scenario for one high-profile fake post: estimate media spend lost, remediation costs, legal fees, and a conservative churn uplift among advertisers; that number will usually justify immediate investment in detection and provenance. Deloitte’s market estimates suggest vendors who delay will face steeper costs later. (deloitte.com)
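One way to picture detection as a layered control is a small triage function that combines provenance, a detector score, and a reverse image check before deciding whether a human needs to look. The sketch below is hypothetical throughout: the signal names, the thresholds, and the three outcomes are assumptions chosen for illustration, not any vendor's API or a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    HOLD = "hold"  # keep out of paid inventory until reviewed


@dataclass(frozen=True)
class Signals:
    has_valid_provenance: bool  # e.g. verified provenance metadata is attached
    detector_score: float       # 0.0 = likely authentic, 1.0 = likely synthetic
    reverse_image_match: bool   # image also appears in an independent source
    is_monetized: bool          # content sits in an ad or sponsorship flow


def triage(s: Signals,
           review_threshold: float = 0.4,
           hold_threshold: float = 0.9) -> Decision:
    """Combine automated signals; thresholds are illustrative assumptions."""
    if not 0.0 <= s.detector_score <= 1.0:
        raise ValueError("detector_score must be between 0.0 and 1.0")

    # Layer 1: valid provenance plus a low detector score passes.
    if s.has_valid_provenance and s.detector_score < review_threshold:
        return Decision.ALLOW

    # Layer 2: very high score with no corroboration of any kind is held.
    if (s.detector_score >= hold_threshold
            and not s.has_valid_provenance
            and not s.reverse_image_match):
        return Decision.HOLD

    # Layer 3: targeted human review for monetized or ambiguous content.
    if s.is_monetized or s.detector_score >= review_threshold:
        return Decision.HUMAN_REVIEW

    return Decision.ALLOW


# A sponsored post with no provenance goes to a reviewer even at a low score.
print(triage(Signals(False, 0.2, False, True)))  # Decision.HUMAN_REVIEW
```

The ordering reflects the idea that no single signal decides the outcome: provenance can clear content, a detector alone can only escalate it, and human review is reserved for the cases where money or ambiguity is involved.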

What happens next

The industry will tighten. Expect platforms to accelerate provenance metadata, publishers to demand stronger verification from creators, and advertisers to require provenance attestations as part of media buys. None of this is frictionless, but trust costs less than cleaning up a brand collapse.

Key Takeaways

- Synthetic imagery that manufactures credibility shifts risk from individuals to platforms, advertisers, and verification vendors.

- Detection plus provenance is already a market and will become a baseline cost for trustworthy content distribution.

- A single exposed fake persona can drain hundreds of thousands of dollars from a campaign and create legal exposure.

- Companies that move early to bake provenance into workflows will reduce remediation costs and gain competitive advantage.

Frequently Asked Questions

How can my company spot AI-manufactured images used to fake credibility?

Use layered signals: provenance metadata, model-detection tools, reverse image checks, and targeted human review. Combining automated detectors with spot manual audits is the fastest way to reduce false positives and false negatives.

Do platforms have to label AI-generated content today?

Some jurisdictions and platforms already require disclosure or watermarking, and coalition standards exist, but enforcement is uneven. Incorporating provenance and requiring attestations from creators is the safest operational posture today.

What should an advertiser do if a paid influencer is exposed as synthetic?

Pause the campaign, document the spend, ask the platform for provenance evidence, and prepare a remediation plan that includes refunds or replacement placements. Legal claims are possible if the influencer deliberately misrepresented material facts.

Will stronger regulation kill legitimate generative AI businesses?

Regulation raises compliance costs but also raises entry barriers for bad actors. Vendors that design safety and provenance into their core product will survive and likely capture more enterprise demand.

Is automated deepfake detection reliable enough to run at scale?

Detectors are improving but are not infallible; they work best when paired with provenance metadata and human review. Treat them as part of a resilient, multi-layered program rather than a single silver bullet.

Related Coverage

Explore our reporting on provenance standards, such as the Coalition for Content Provenance and Authenticity, and see how major platforms are implementing watermarks and metadata. Readers concerned with enterprise risk should also read our pieces on ad verification and the economics of digital trust.

SOURCES: https://www.entertainmentdaily.com/news/is-katie-prices-new-husband-lee-andrews-actually-ai-all-the-clues/ , https://www.the-independent.com/bulletin/lifestyle/katie-price-wedding-relationship-lee-andrews-dubai-video-b2916646.html , https://tdpelmedia.com/katie-prices-dubai-wedding-sparks-scrutiny-as-investigations-raise-questions-over-his-claims-of-being-a-cambridge-educated-billionaire-tycoon/ , https://time.com/7290050/veo-3-google-misinformation-deepfake/ , https://www.deloitte.com/us/en/insights/industry/technology/technology-media-and-telecom-predictions/2025/gen-ai-trust-standards.html